Simpler tree simulation

jhmarcus

2019-05-02

Last updated: 2019-05-06

Checks: 5 1

Knit directory: drift-workflow/analysis/

This reproducible R Markdown analysis was created with workflowr (version 1.2.0). The Report tab describes the reproducibility checks that were applied when the results were created. The Past versions tab lists the development history.

The R Markdown file has unstaged changes. To know which version of the R Markdown file created these results, you’ll want to first commit it to the Git repo. If you’re still working on the analysis, you can ignore this warning. When you’re finished, you can run wflow_publish to commit the R Markdown file and build the HTML.

Great job! The global environment was empty. Objects defined in the global environment can affect the analysis in your R Markdown file in unknown ways. For reproduciblity it’s best to always run the code in an empty environment.

The command set.seed(20190211) was run prior to running the code in the R Markdown file. Setting a seed ensures that any results that rely on randomness, e.g. subsampling or permutations, are reproducible.

Great job! Recording the operating system, R version, and package versions is critical for reproducibility.

Nice! There were no cached chunks for this analysis, so you can be confident that you successfully produced the results during this run.

Great! You are using Git for version control. Tracking code development and connecting the code version to the results is critical for reproducibility. The version displayed above was the version of the Git repository at the time these results were generated.

Note that you need to be careful to ensure that all relevant files for the analysis have been committed to Git prior to generating the results (you can use wflow_publish or wflow_git_commit). workflowr only checks the R Markdown file, but you know if there are other scripts or data files that it depends on. Below is the status of the Git repository when the results were generated:

Ignored files:

Ignored: .Rhistory

Ignored: analysis/.Rhistory

Ignored: analysis/flash_cache/

Ignored: data.tar.gz

Ignored: data/datasets/

Ignored: data/raw/

Ignored: output.tar.gz

Ignored: output/

Unstaged changes:

Modified: analysis/ebnm_bimodal.Rmd

Modified: analysis/simpler_tree_simulation.Rmd

Deleted: code/ebnm_bimodal.R

Note that any generated files, e.g. HTML, png, CSS, etc., are not included in this status report because it is ok for generated content to have uncommitted changes.

These are the previous versions of the R Markdown and HTML files. If you’ve configured a remote Git repository (see ?wflow_git_remote), click on the hyperlinks in the table below to view them.

| File | Version | Author | Date | Message |

|---|---|---|---|---|

| Rmd | 44a10f7 | jhmarcus | 2019-05-05 | added bimodal ash exploration |

| html | 44a10f7 | jhmarcus | 2019-05-05 | added bimodal ash exploration |

| Rmd | 280cc1f | jhmarcus | 2019-05-03 | added printing of noise in simpler |

| html | 280cc1f | jhmarcus | 2019-05-03 | added printing of noise in simpler |

| Rmd | 912c5ff | jhmarcus | 2019-05-03 | added simpler tree sim |

| html | 912c5ff | jhmarcus | 2019-05-03 | added simpler tree sim |

In this analysis I simulate data from the same tree as described in Simple Tree Simulation (also see below) but parameterize the simulation as a factor analysis model i.e. simulating under the model we are fitting. I also removed the additional binomial sampling from the allele frequencies at the tips and just directly modeled Gaussian data.

Import

Here I import the some required packages:

library(ggplot2)

library(dplyr)

library(tidyr)

library(ashr)

library(flashier)

library(flashr)

source("../code/viz.R")

# bimodal g prior list used throughout

m = 20

b = seq(1.0, 0.0, length=m)

a = seq(0.0, 1.0, length=m)

bimodal_g = ashr:::unimix(rep(0, 2*m), c(rep(0, m),b), c(a, rep(1,m)))Functions

#' @title Simpler Tree Simulation

#'

#' @description Simulates genotypes under a simple population

#' tree as described in Pickrell and Pritchard 2012 via

#' a factor analysis model:

#'

#' https://journals.plos.org/plosgenetics/article?id=10.1371/journal.pgen.1002967

#'

#' @param n_per_pop number of individuals per population

#' @param p number of SNPs

#' @param sigma_e std. dev of noise

simpler_tree_simulation = function(n_per_pop, p, sigma_e){

n = n_per_pop * 4

L = matrix(0, nrow=4*n_per_pop, ncol=6)

L[1:n_per_pop, 2] = L[1:n_per_pop, 6] = 1

L[(n_per_pop + 1):(2*n_per_pop), 2] = L[(n_per_pop + 1):(2*n_per_pop), 5] = 1

L[(2*n_per_pop + 1):(3*n_per_pop), 1] = L[(2*n_per_pop + 1):(3*n_per_pop), 3] = 1

L[(3*n_per_pop + 1):(4*n_per_pop), 1] = L[(3*n_per_pop + 1):(4*n_per_pop), 4] = 1

Z = matrix(rnorm(p*6, 0, 1), nrow=p, ncol=6)

E = matrix(rnorm(n*p, 0, sigma_e), nrow=n, ncol=p)

Y = L %*% t(Z) + E

res = list(Y=Y, L=L, Z=Z)

return(res)

}

plot_flash_loadings = function(flash_fit, n_per_pop){

l_df = as.data.frame(flash_fit$loadings$normalized.loadings[[1]])

colnames(l_df) = 1:ncol(l_df)

l_df$ID = 1:nrow(l_df)

l_df$pop = c(rep("Pop1", n_per_pop), rep("Pop2", n_per_pop),

rep("Pop3", n_per_pop), rep("Pop4", n_per_pop))

gath_l_df = l_df %>% gather(K, value, -ID, -pop)

p1 = ggplot(gath_l_df, aes(x=ID, y=value, color=pop)) +

geom_point() +

facet_wrap(K~., scale="free") +

theme_bw()

p2 = structure_plot(gath_l_df,

colset="Set3",

facet_grp="pop",

facet_levels=paste0("Pop", 1:4),

keep_leg=TRUE,

fact_type="nonnegative")

return(list(p1=p1, p2=p2))

}Low Noise

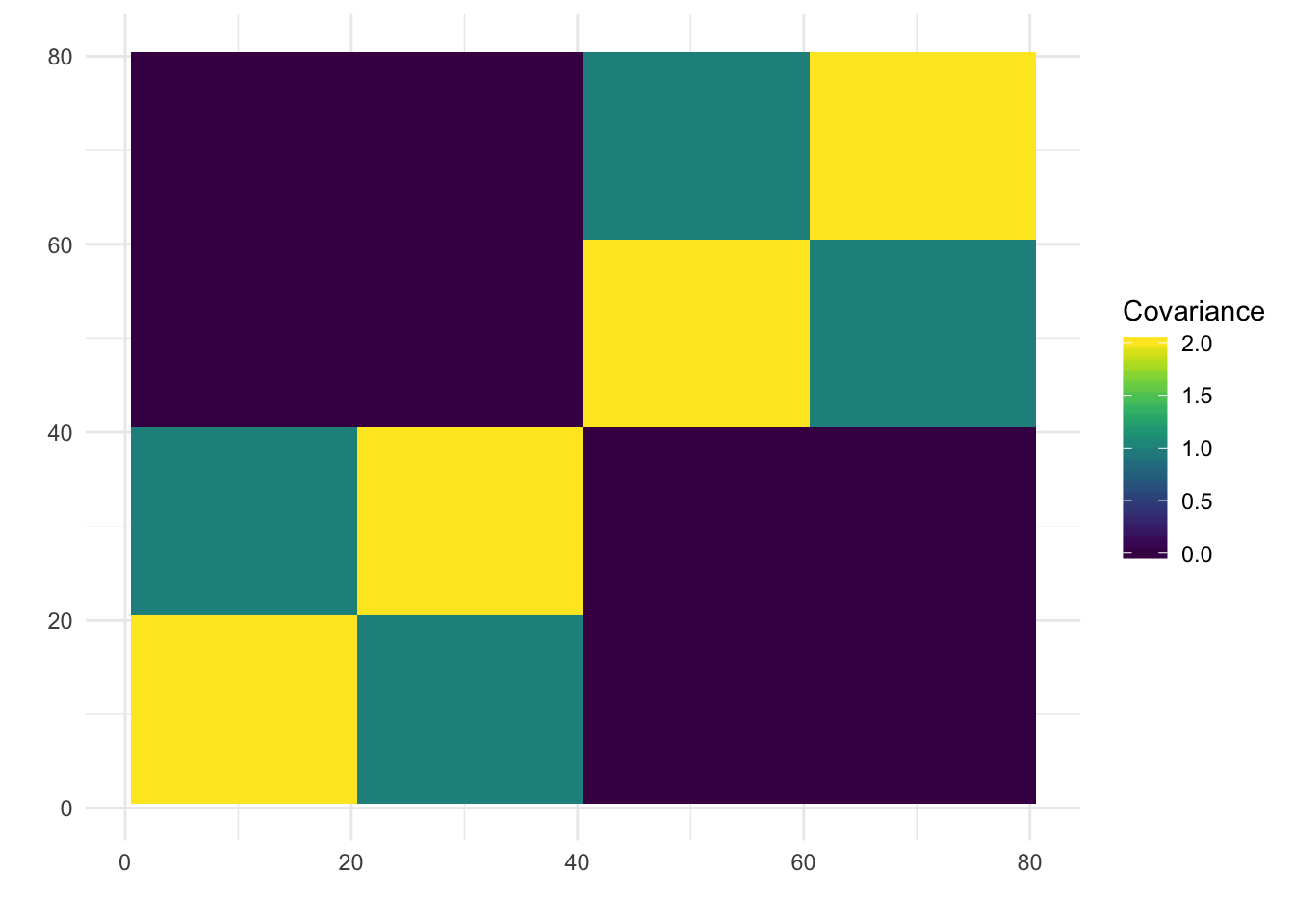

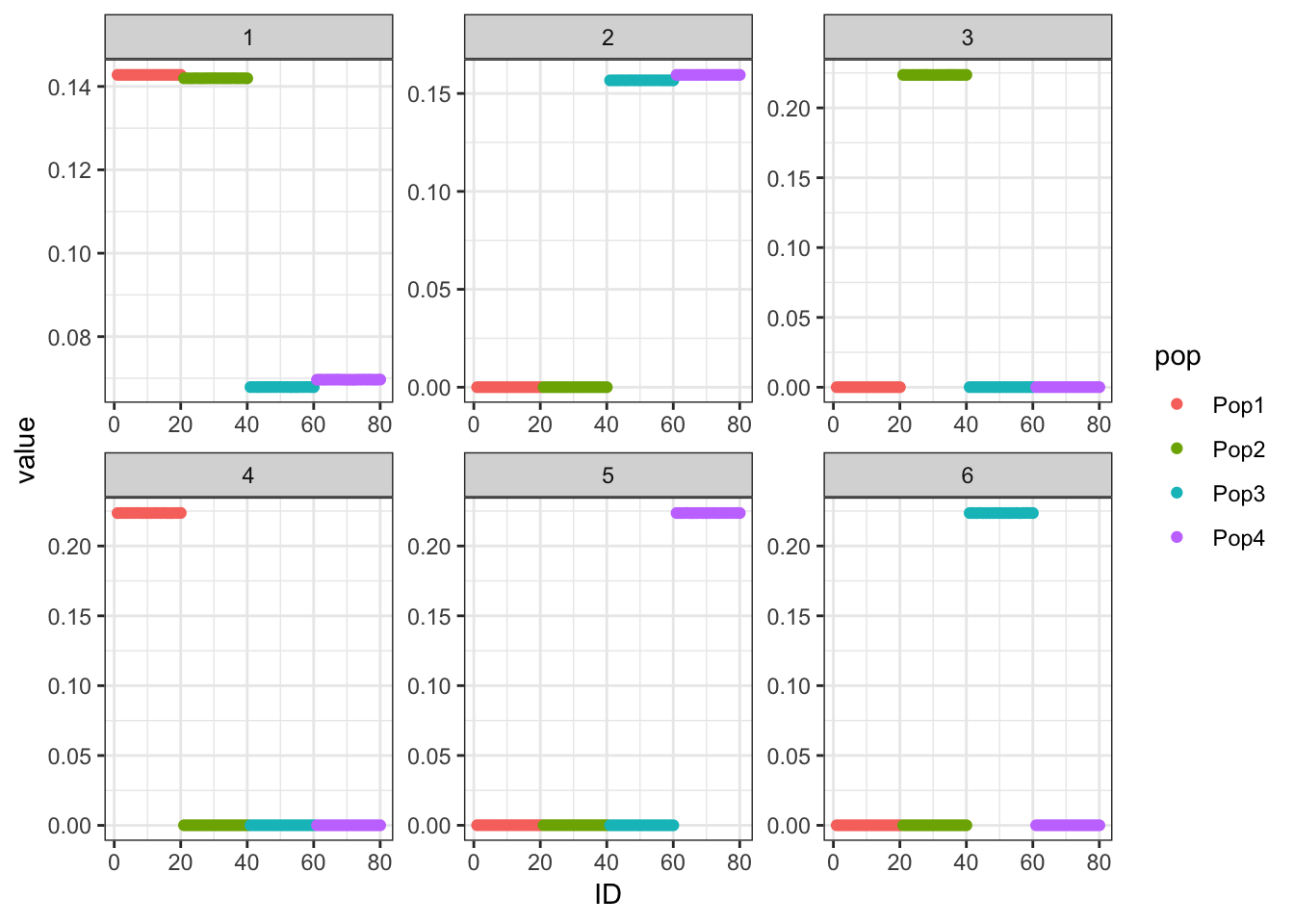

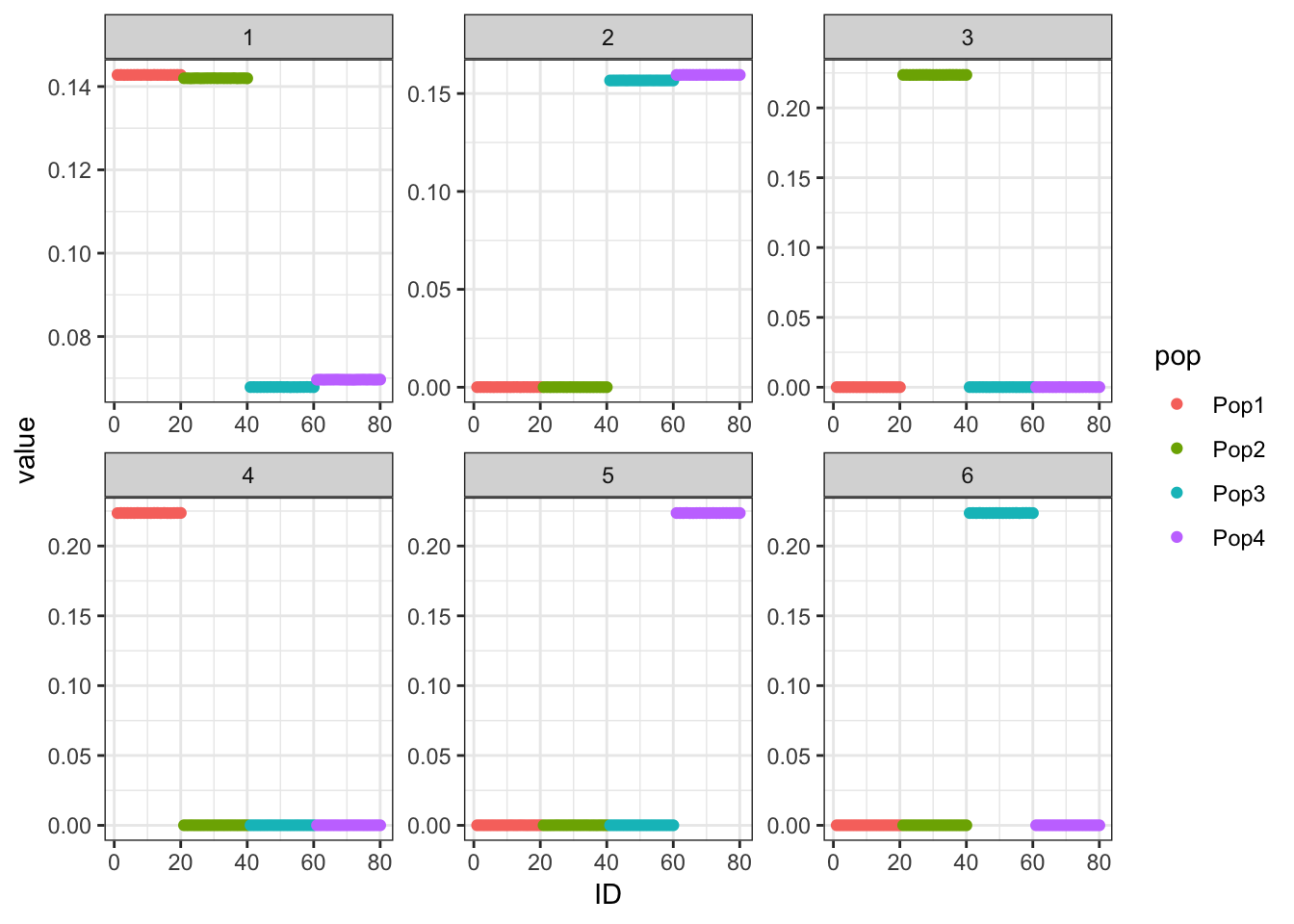

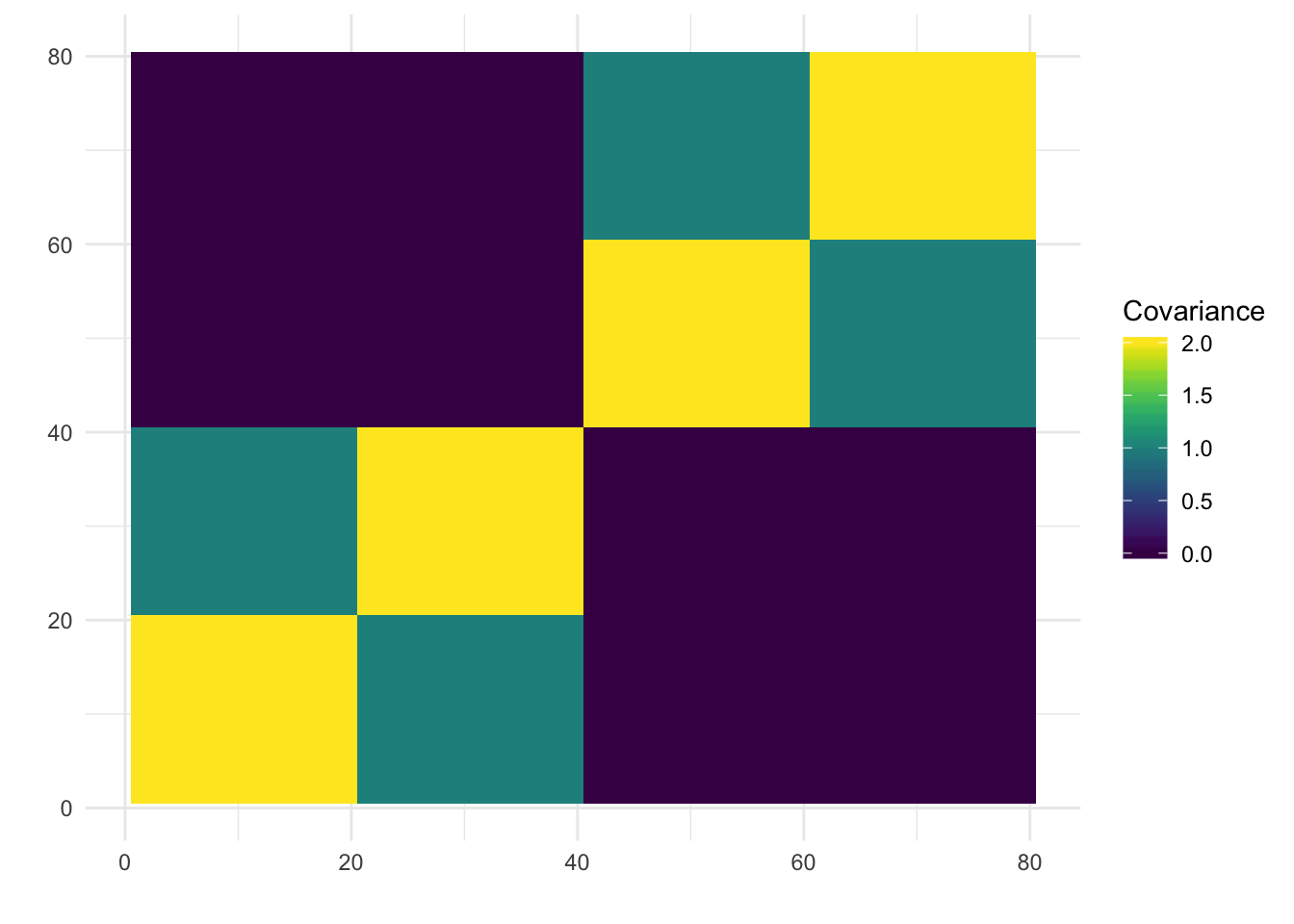

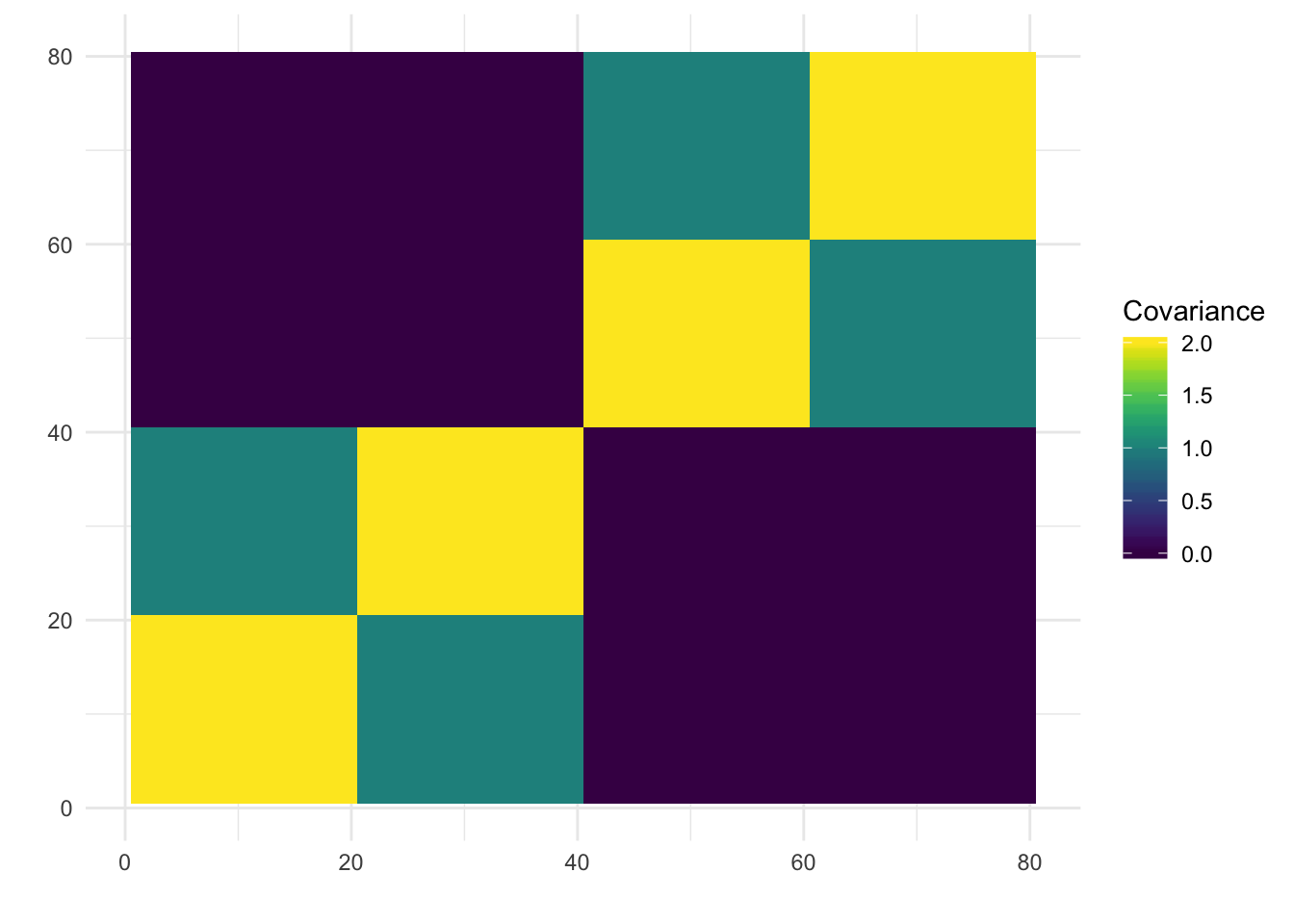

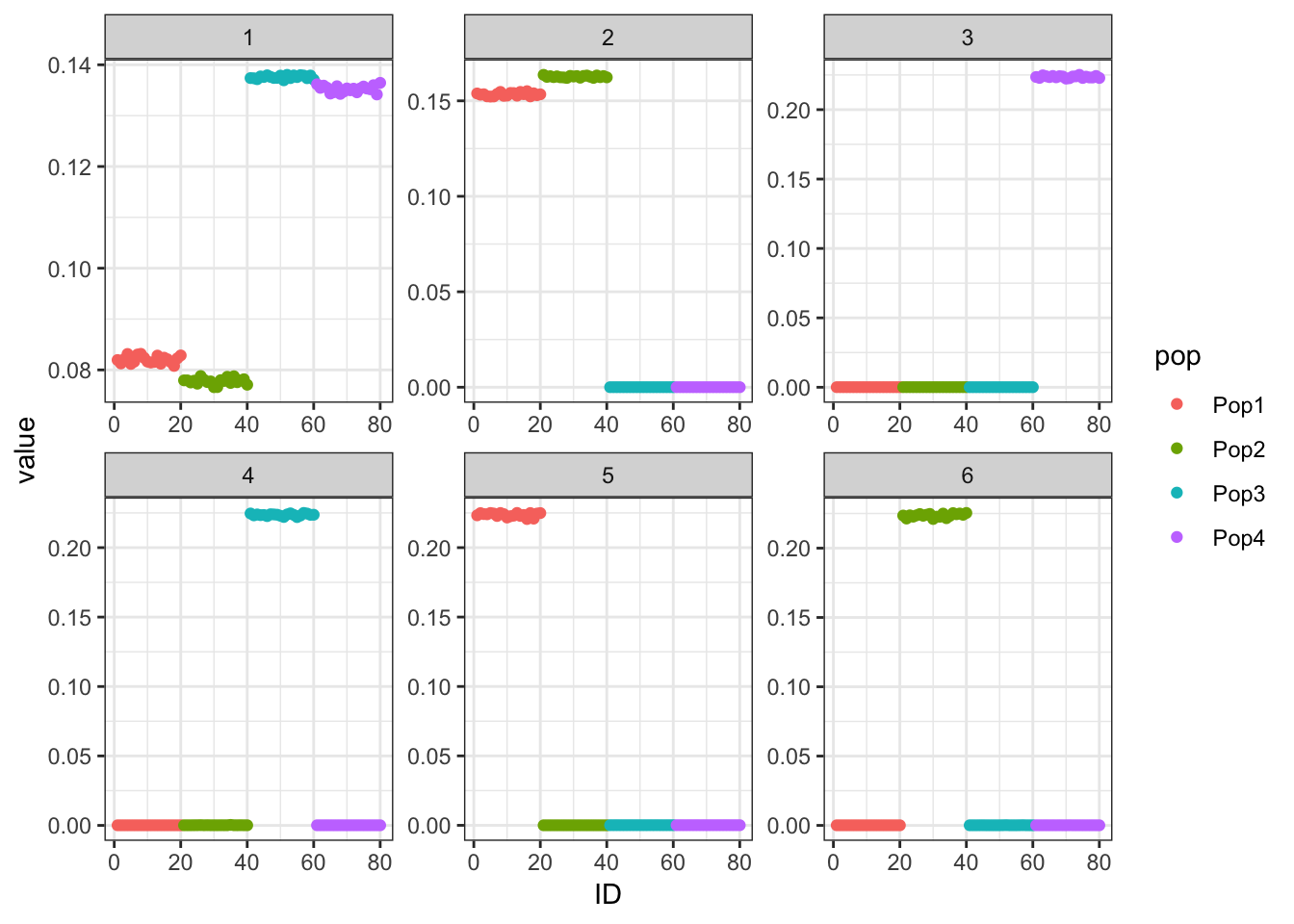

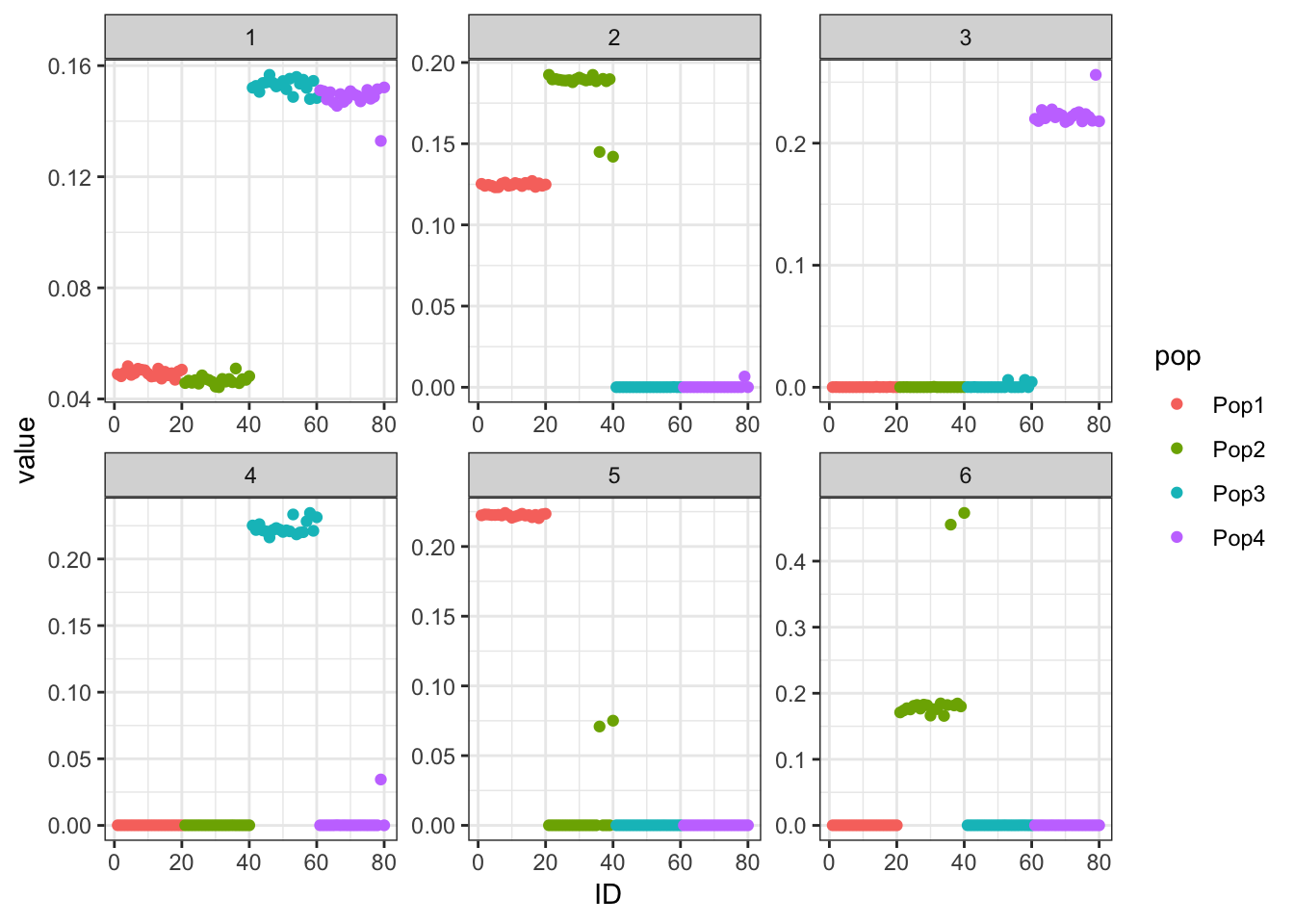

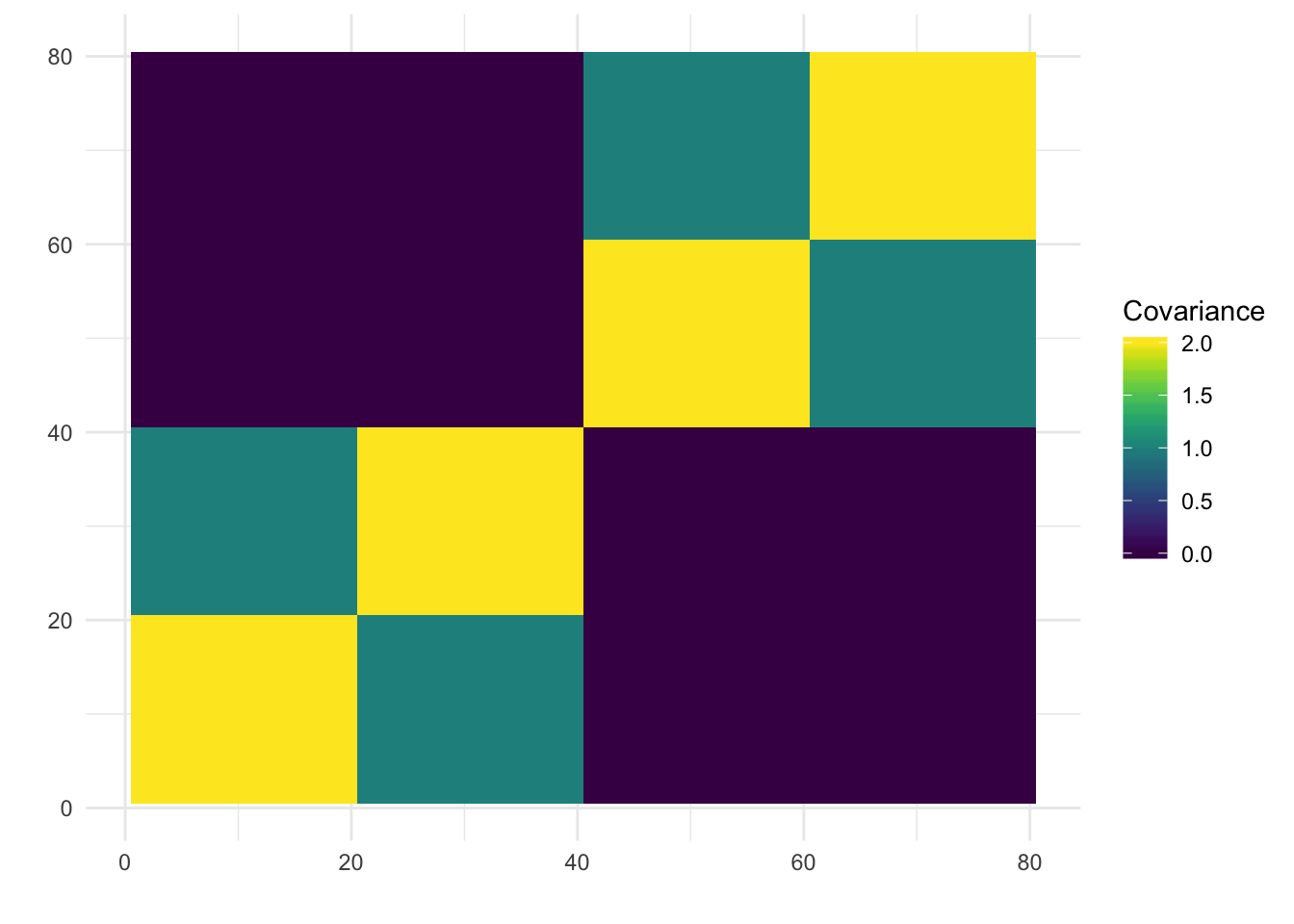

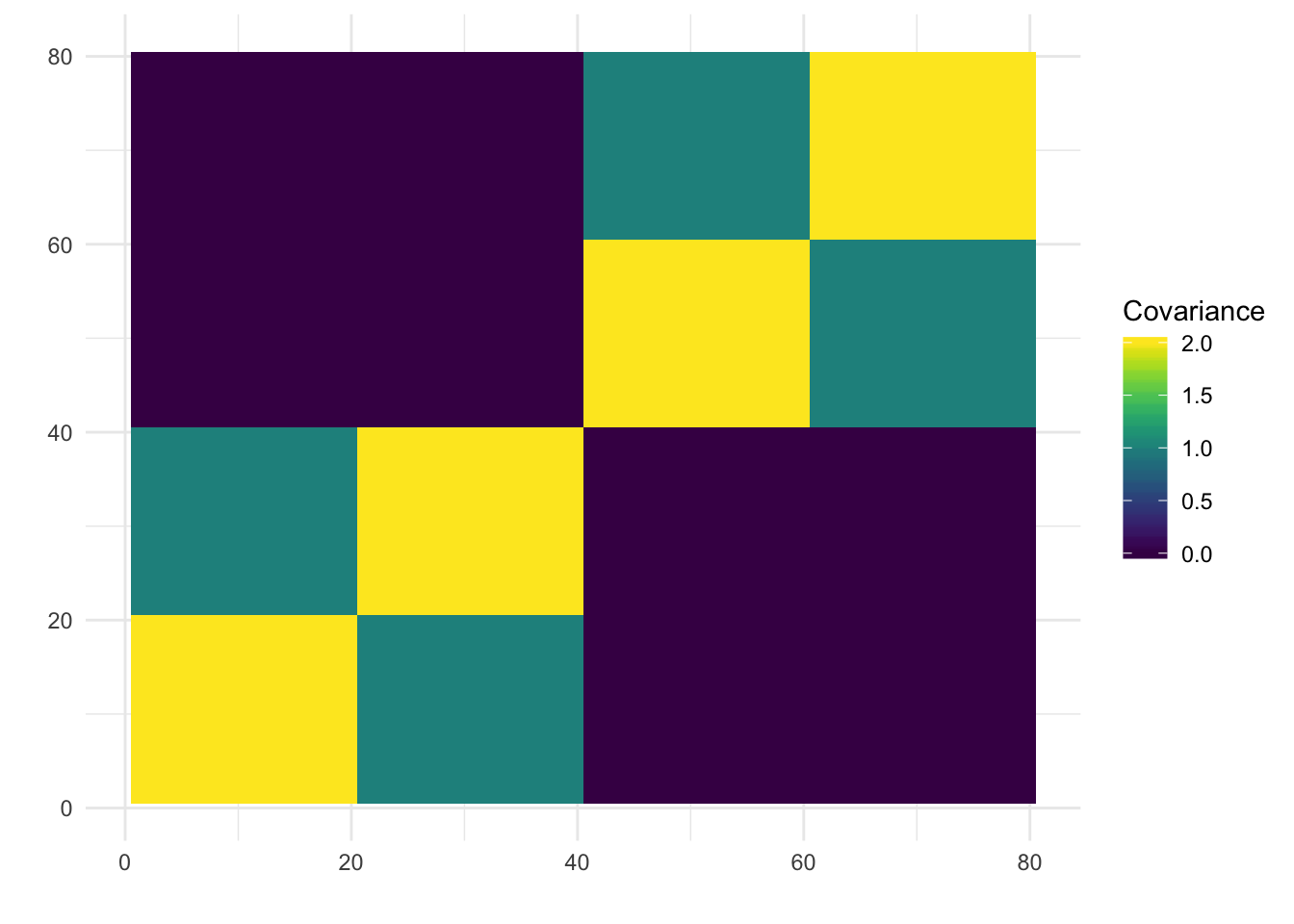

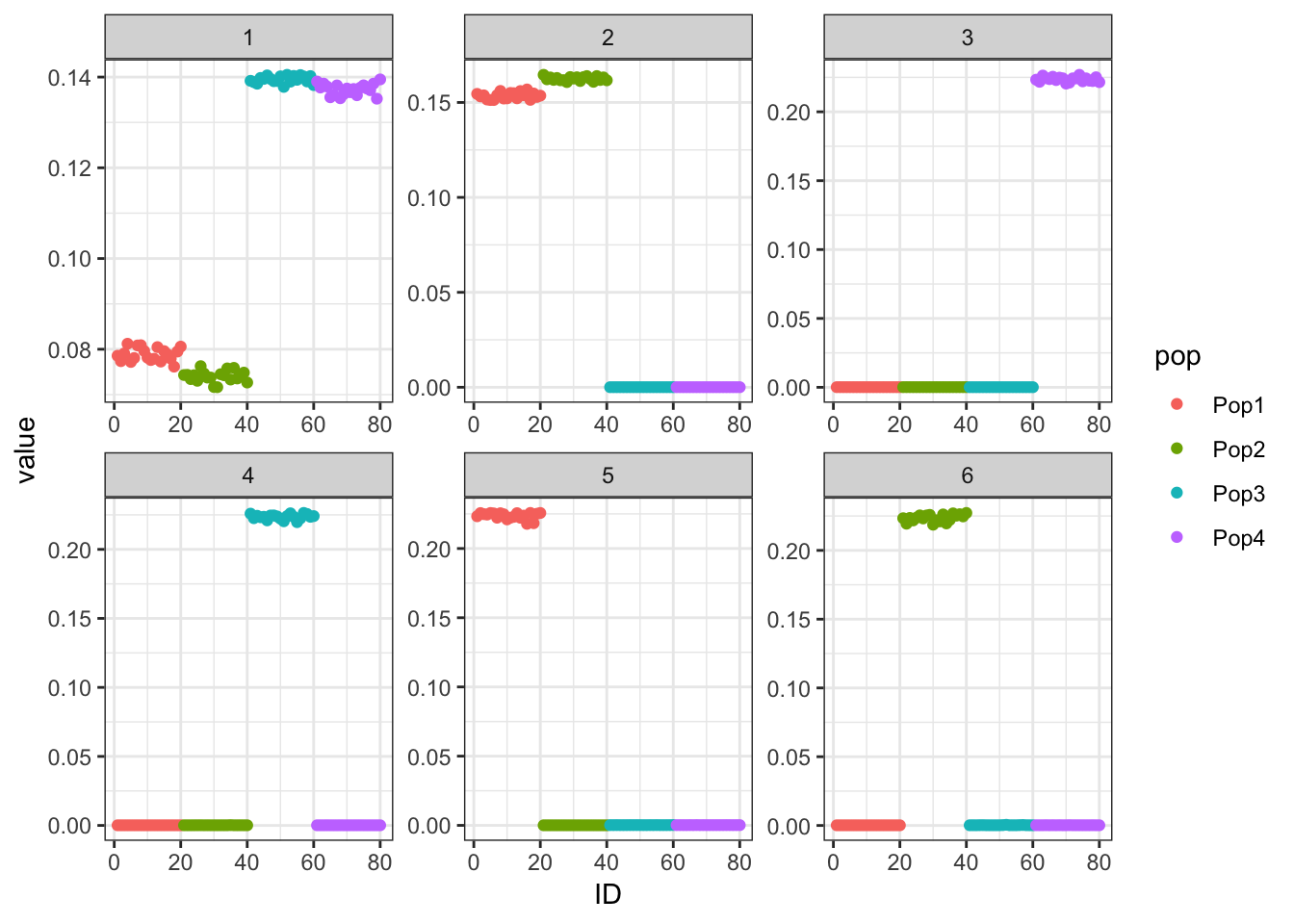

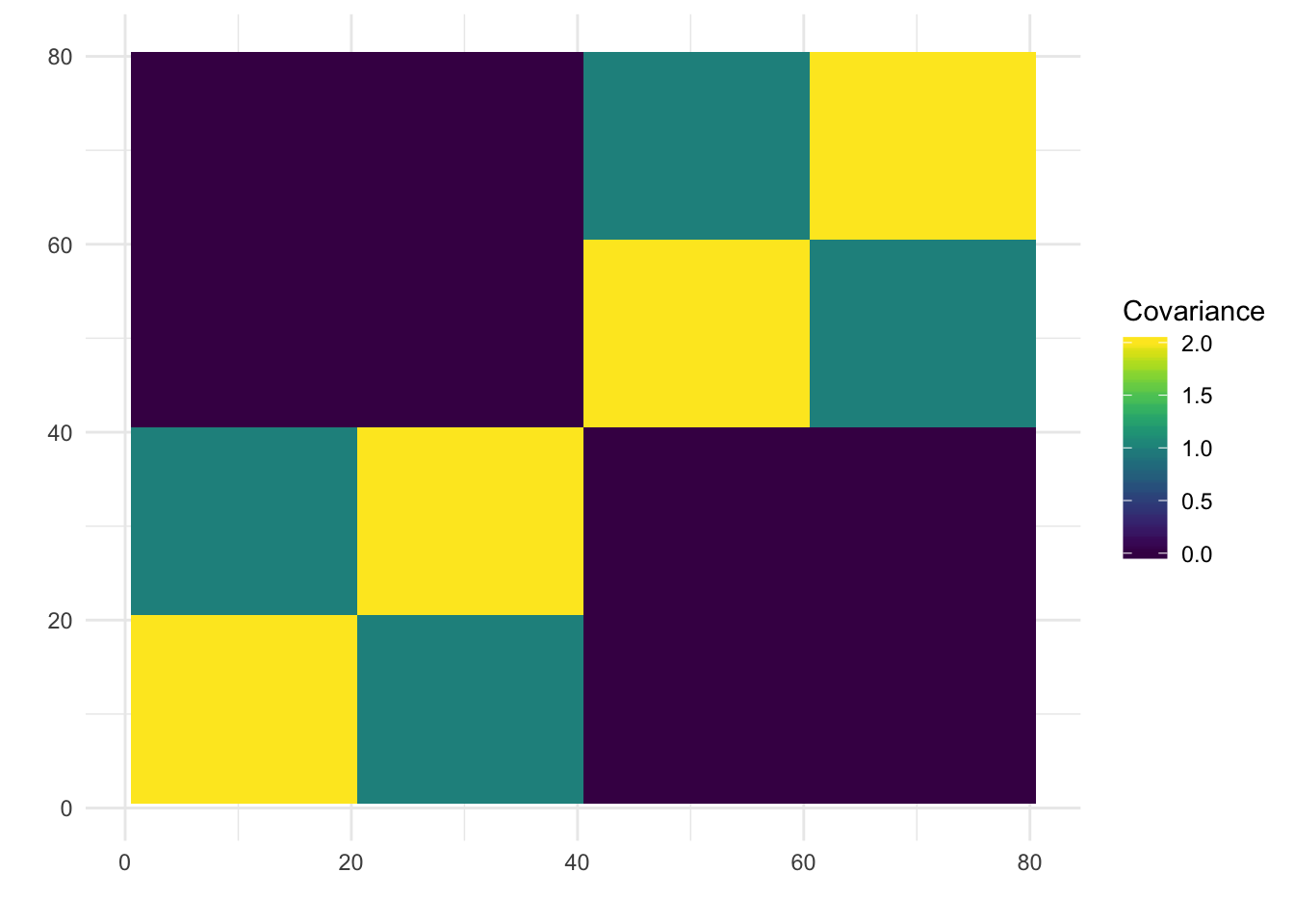

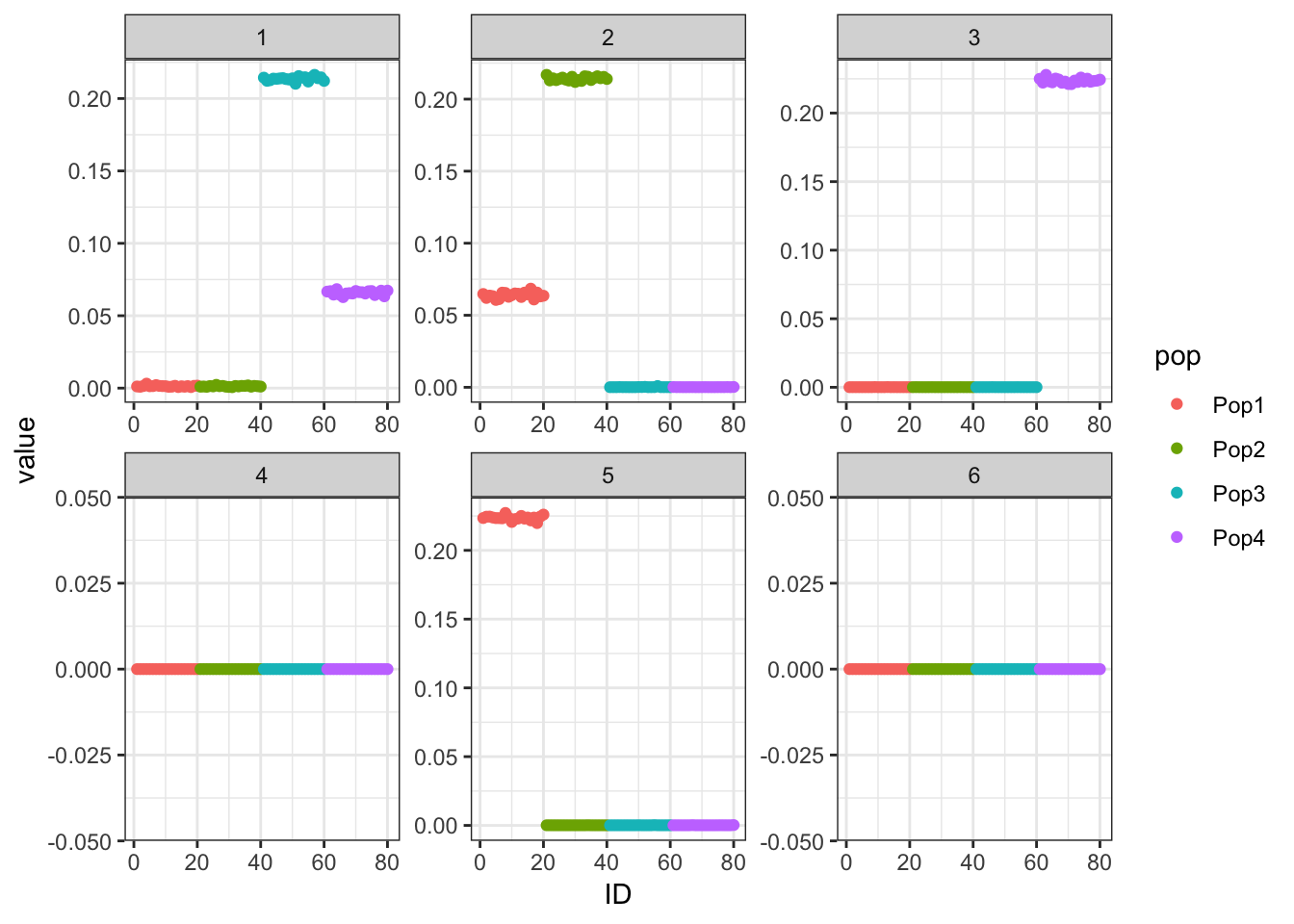

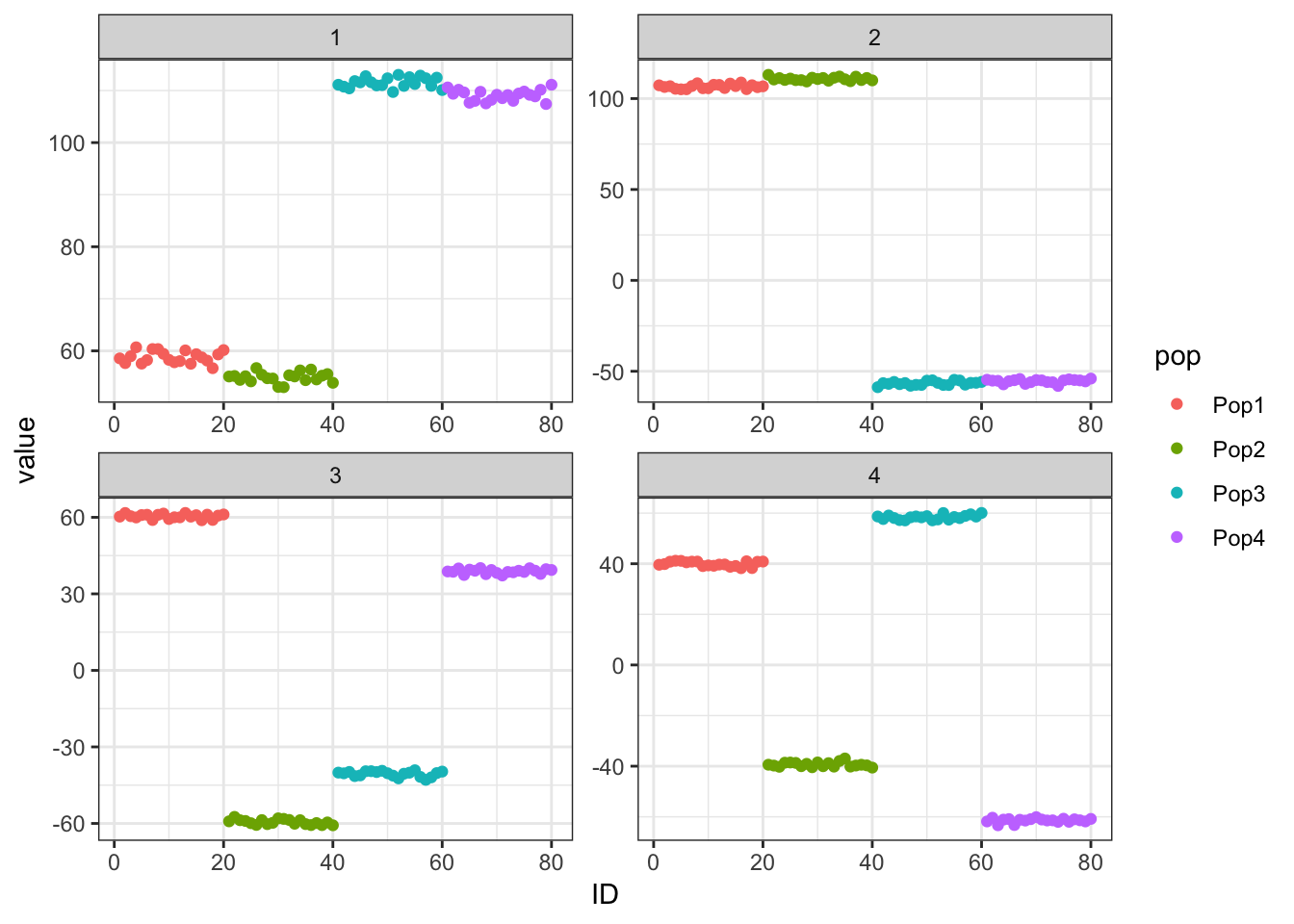

I display the true loadings matrix and population covariance matrix for a simulation of 20 individuals per population with 10000 independent SNPs.

set.seed(1234)

n_per_pop = 20

sigma_e = .01

p = 10000

sim_res = simpler_tree_simulation(n_per_pop, p, sigma_e)

Y = sim_res$Y

L = sim_res$L

LLt = L %*% t(L)

plot_covmat(LLt)

| Version | Author | Date |

|---|---|---|

| 912c5ff | jhmarcus | 2019-05-03 |

l_df = data.frame(L)

colnames(l_df) = paste0("K", 1:6)

l_df$iid = 1:nrow(L)

l_df$pop = c(rep("Pop1", n_per_pop), rep("Pop2", n_per_pop),

rep("Pop3", n_per_pop), rep("Pop4", n_per_pop))

gath_l_df = l_df %>% gather(K, value, -iid, -pop)

p = ggplot(gath_l_df, aes(x=iid, y=value, color=pop)) +

geom_point() +

facet_wrap(K~., scale="free") +

theme_bw()

p

| Version | Author | Date |

|---|---|---|

| 912c5ff | jhmarcus | 2019-05-03 |

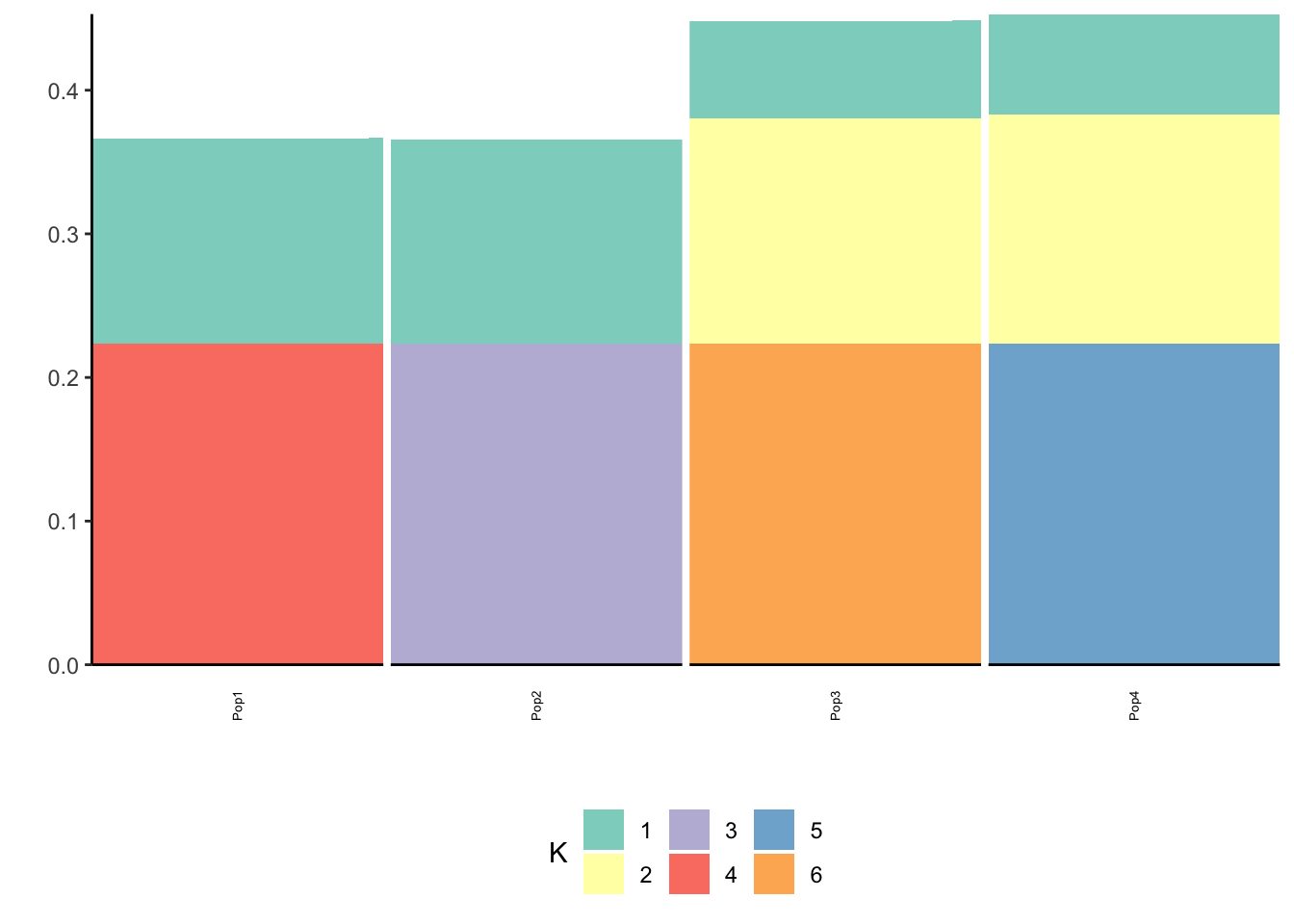

Here we can see the block-like structure to the covariance matrix.

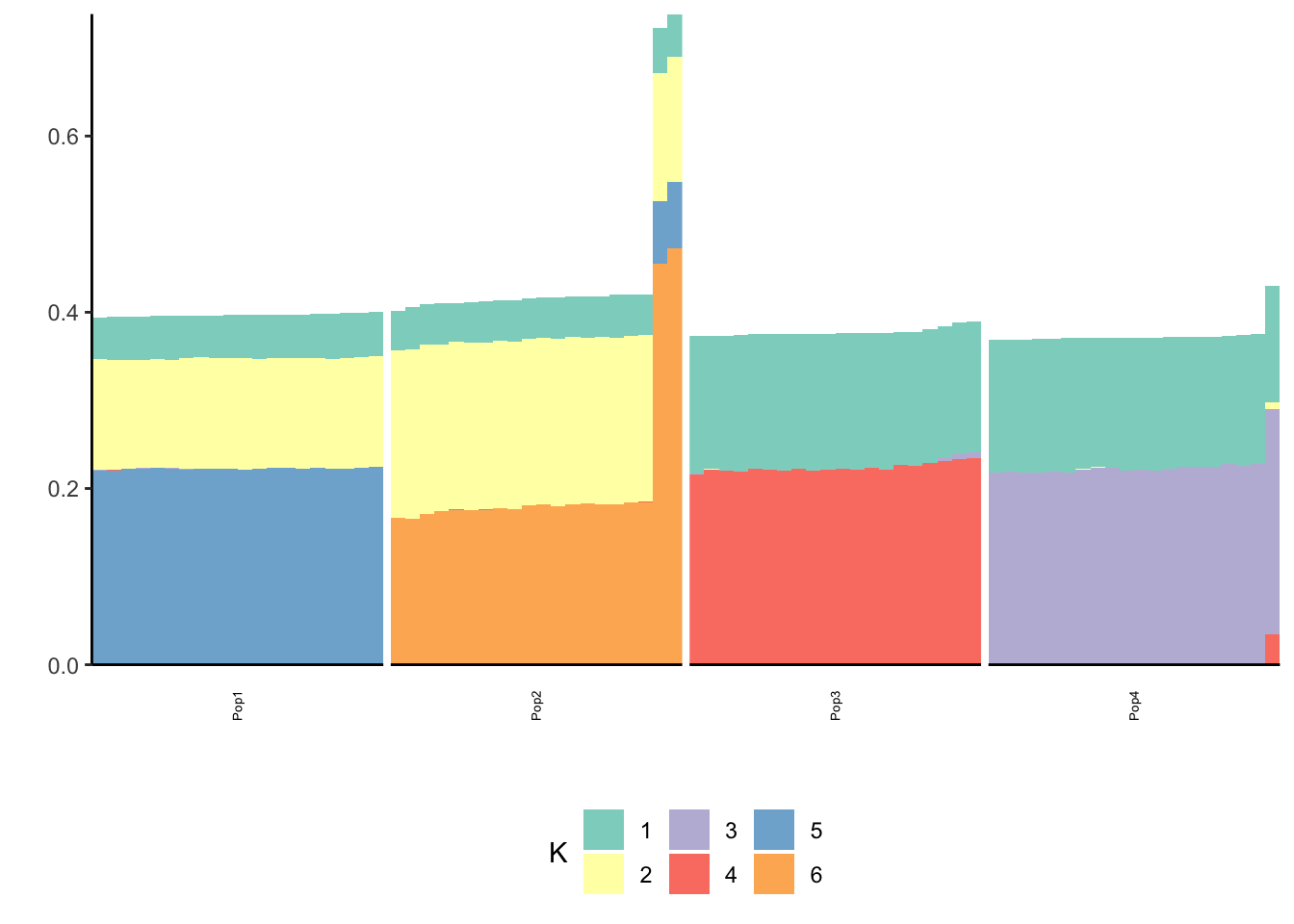

Greedy

I fit greedy flash:

flash_fit = flashier::flashier(Y,

greedy.Kmax=10,

prior.type=c("nonnegative", "point.normal"),

ebnm.param=list(fixg=TRUE, g=list(pi0=0, a=1, mu=0)),

var.type=0,

backfit="none")Initializing flash object...

Adding factor 1 to flash object...

Adding factor 2 to flash object...

Adding factor 3 to flash object...

Adding factor 4 to flash object...

Adding factor 5 to flash object...

Adding factor 6 to flash object...

Adding factor 7 to flash object...

Factor doesn't increase objective and won't be added.

Nullchecking 6 factors...

Wrapping up...

Done.Lhat = flash_fit$loadings$normalized.loadings[[1]]

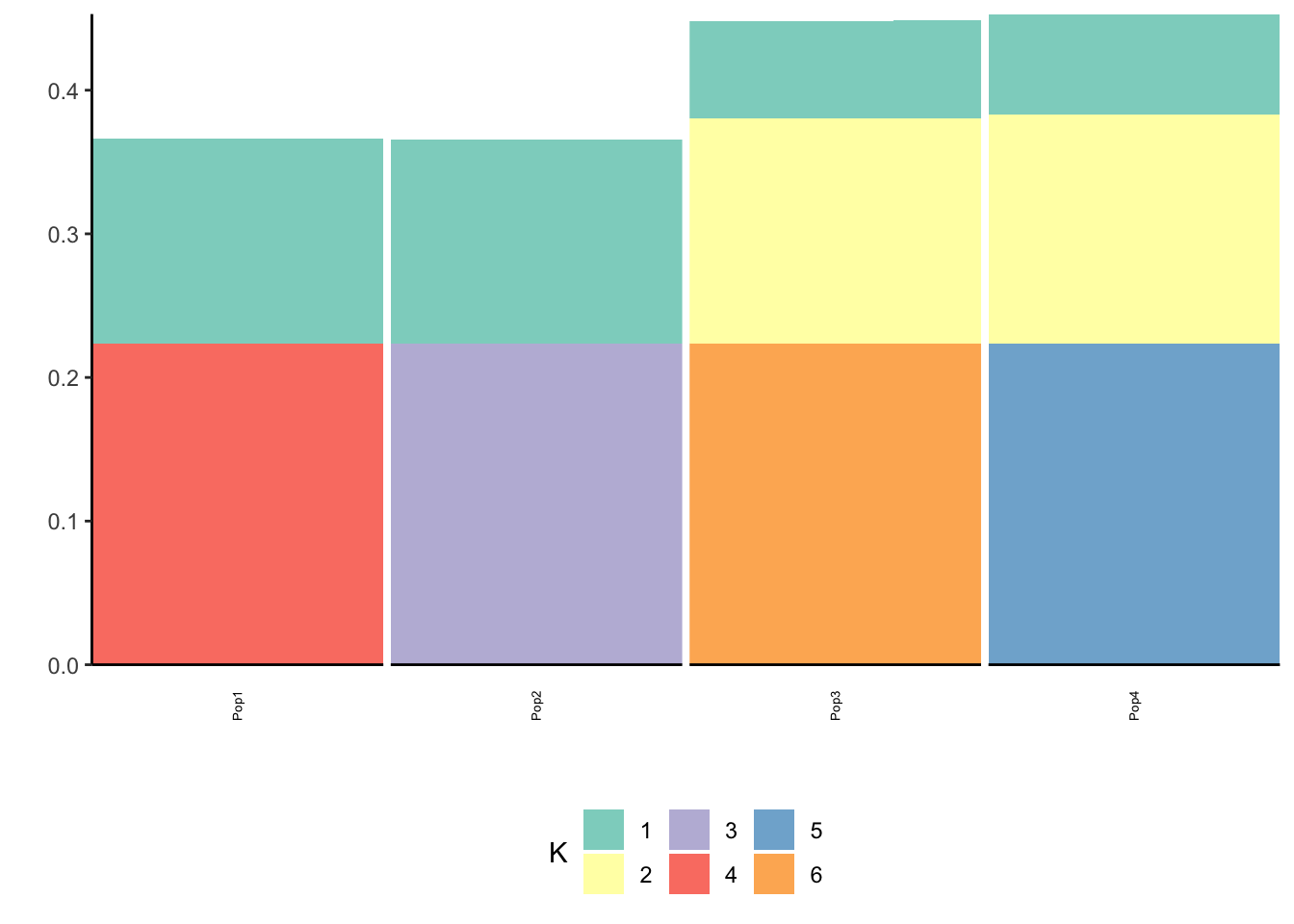

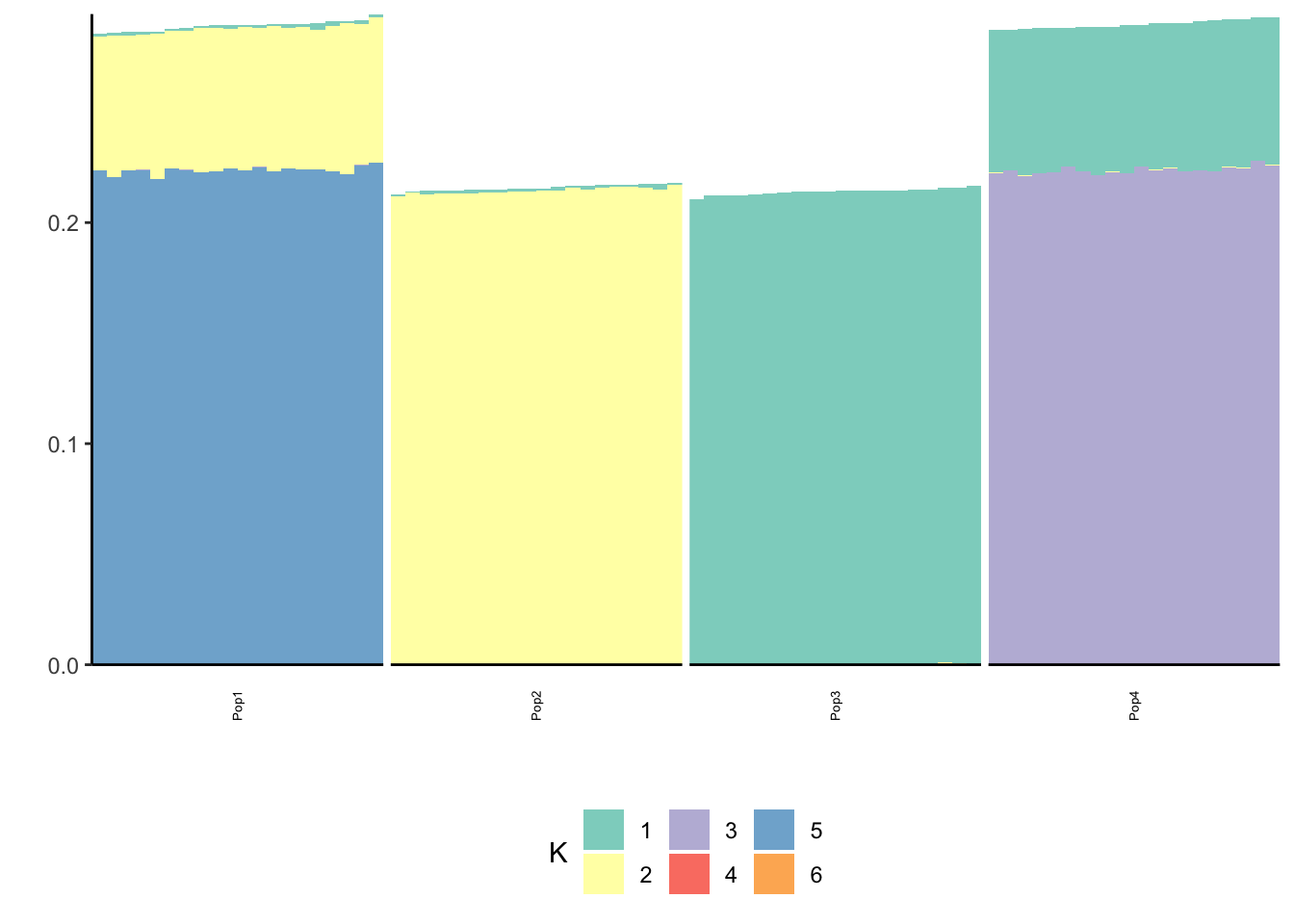

p_res = plot_flash_loadings(flash_fit, n_per_pop)

print(p_res$p1)

| Version | Author | Date |

|---|---|---|

| 912c5ff | jhmarcus | 2019-05-03 |

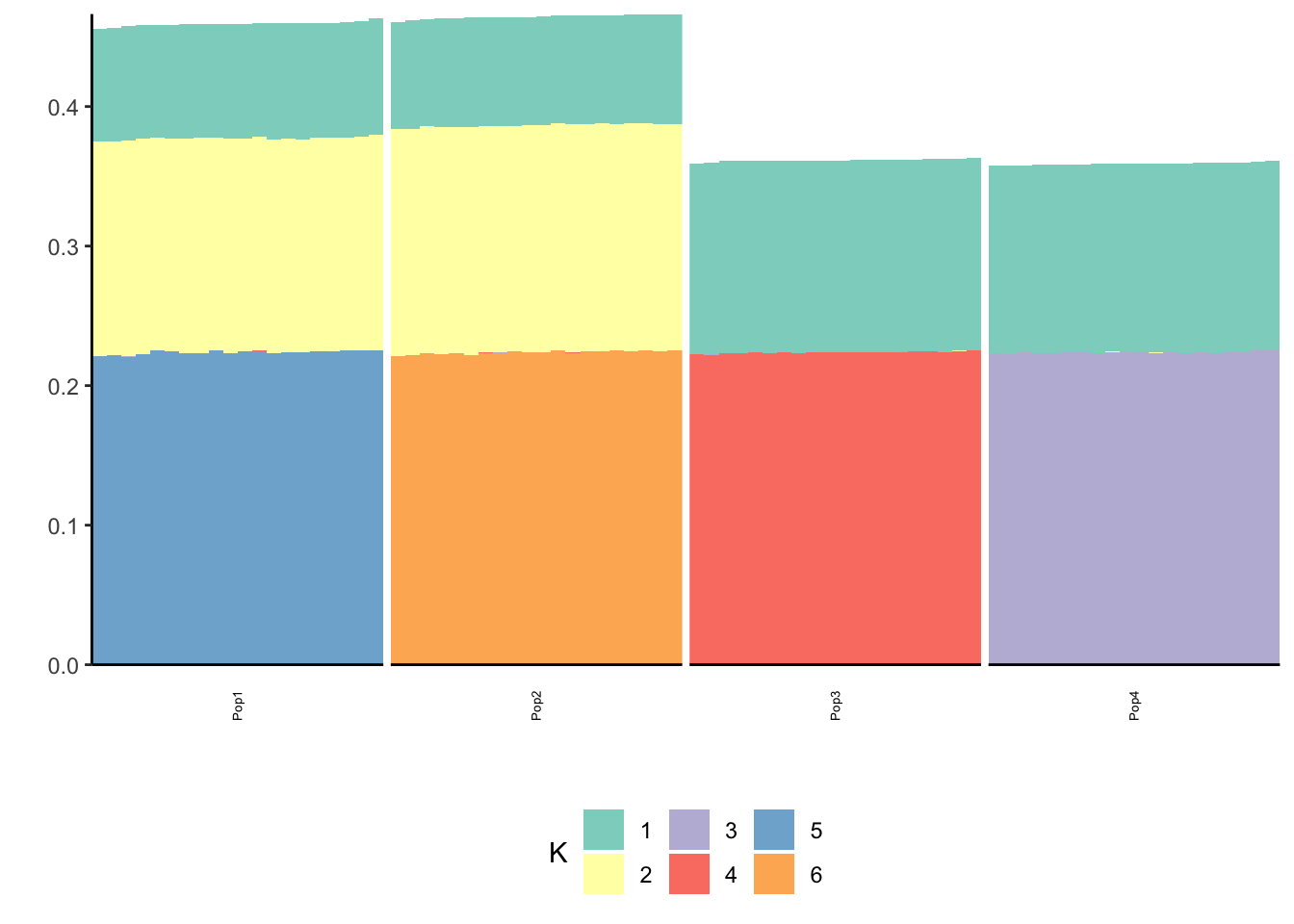

print(p_res$p2)

| Version | Author | Date |

|---|---|---|

| 912c5ff | jhmarcus | 2019-05-03 |

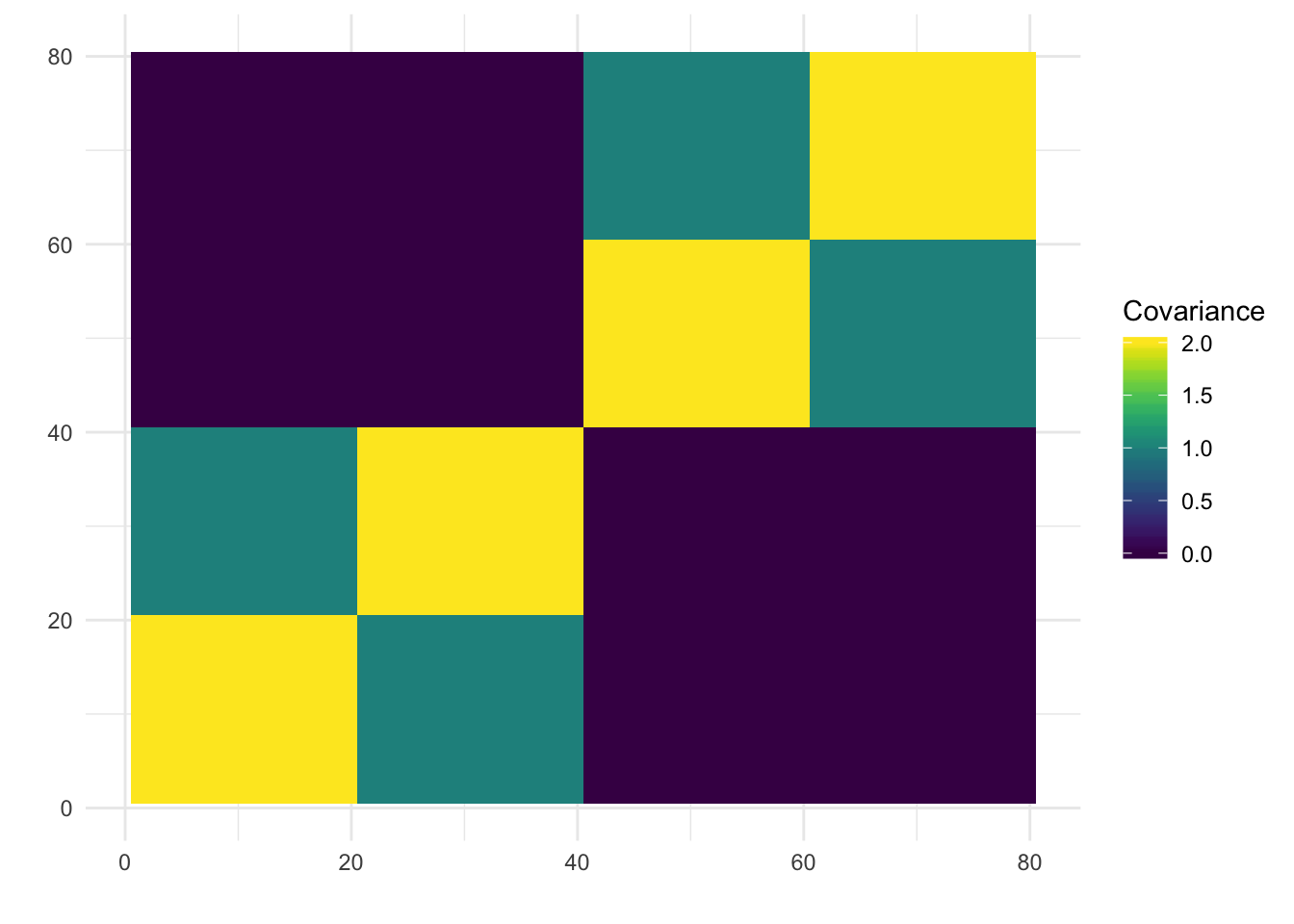

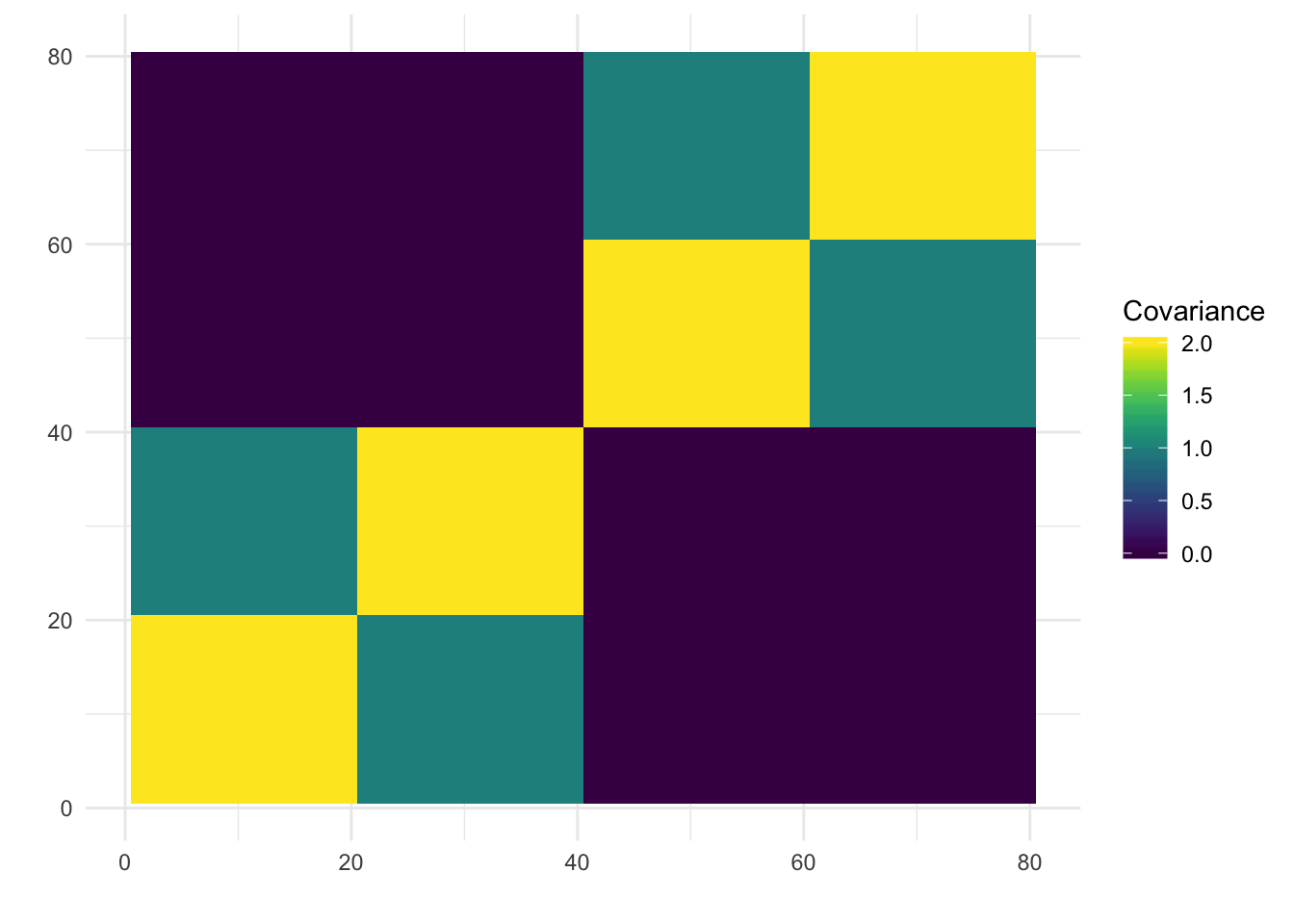

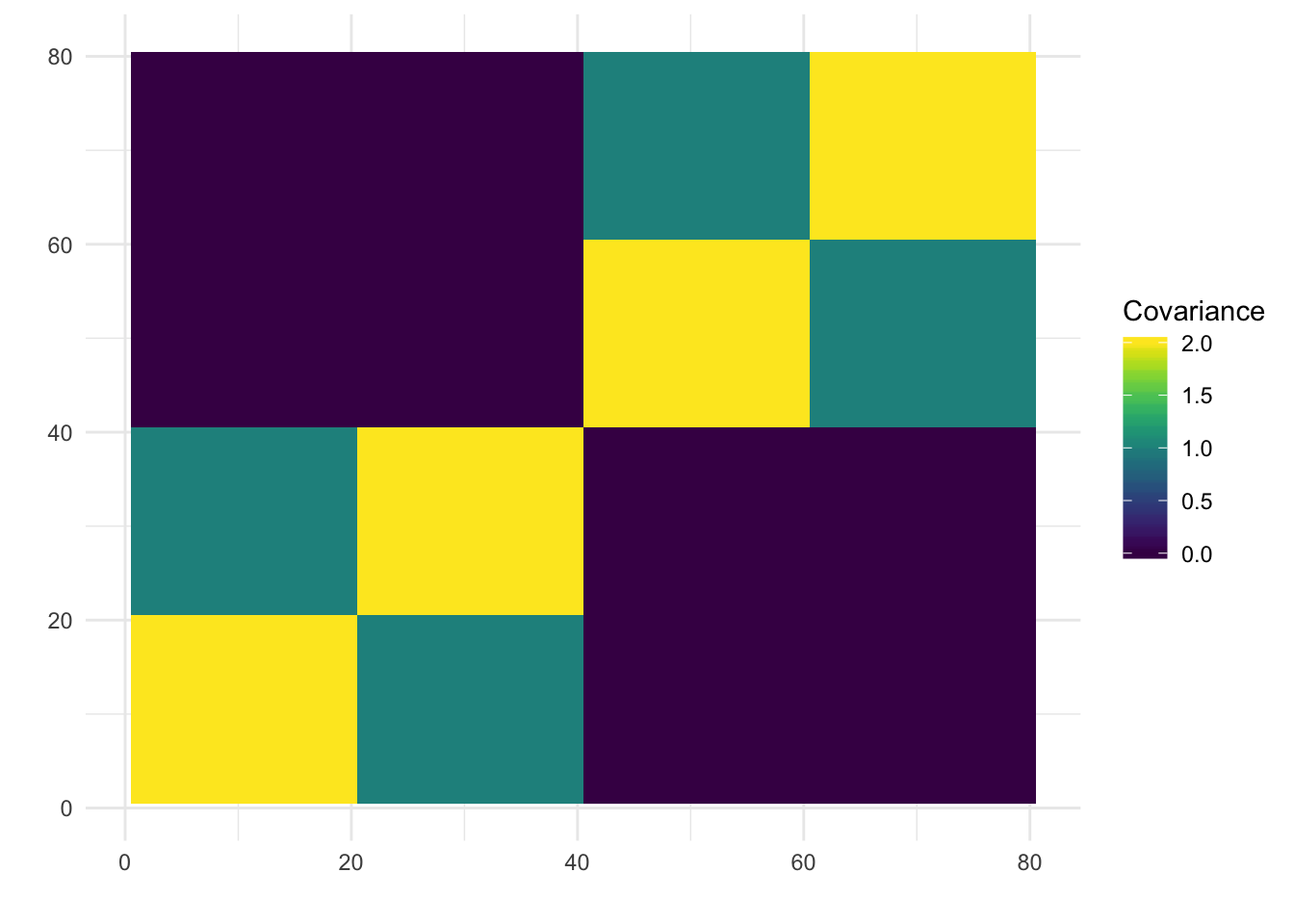

print(paste0("objective=", flash_fit$objective))[1] "objective=72473.5307279732"print(paste0("est_sd=", sqrt(1 / flash_fit$fit$tau)))[1] "est_sd=0.188101535612845"print(plot_covmat(Lhat %*% t(Lhat)))

| Version | Author | Date |

|---|---|---|

| 44a10f7 | jhmarcus | 2019-05-05 |

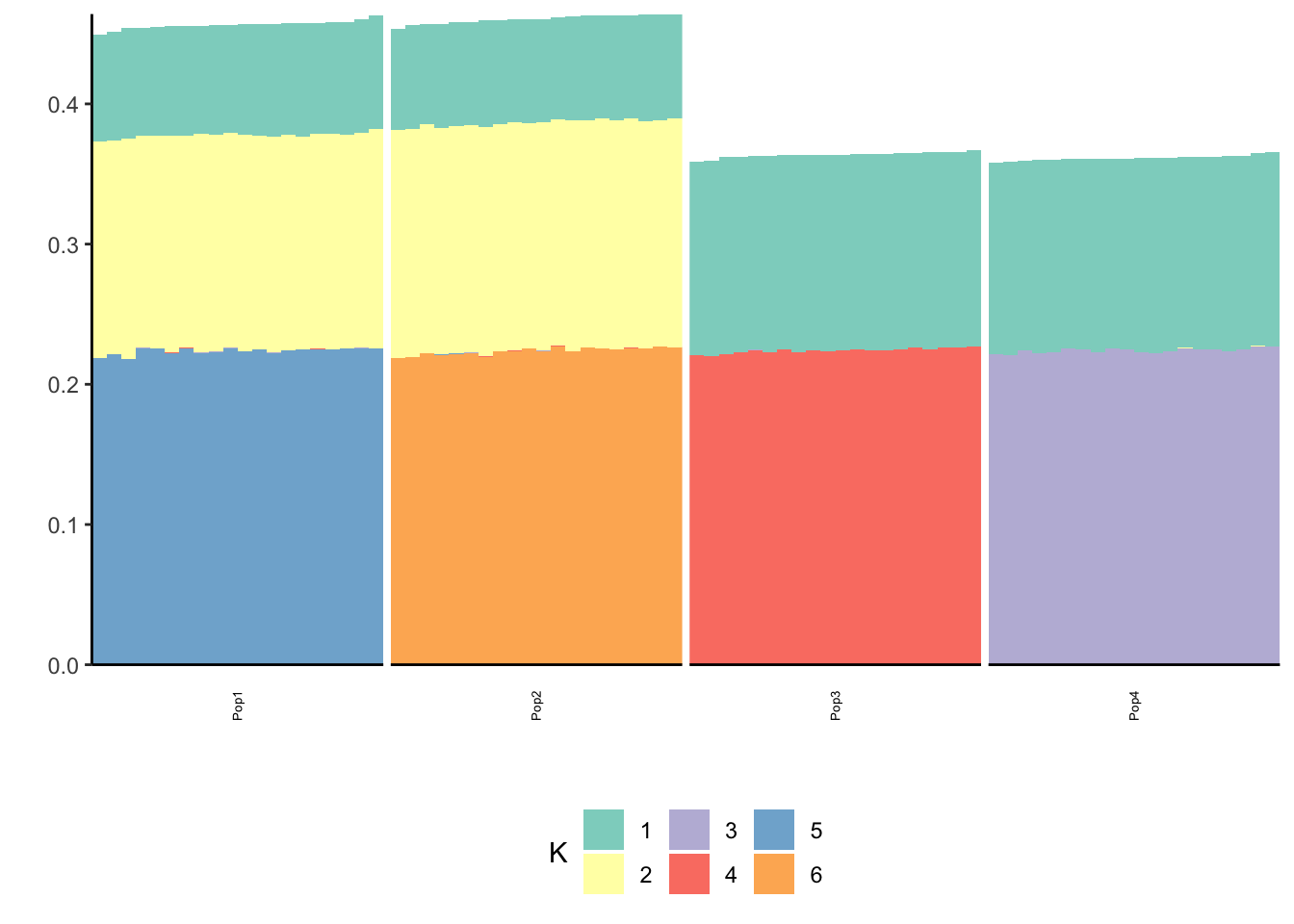

We can see the loadings matrix is recovered pretty well.

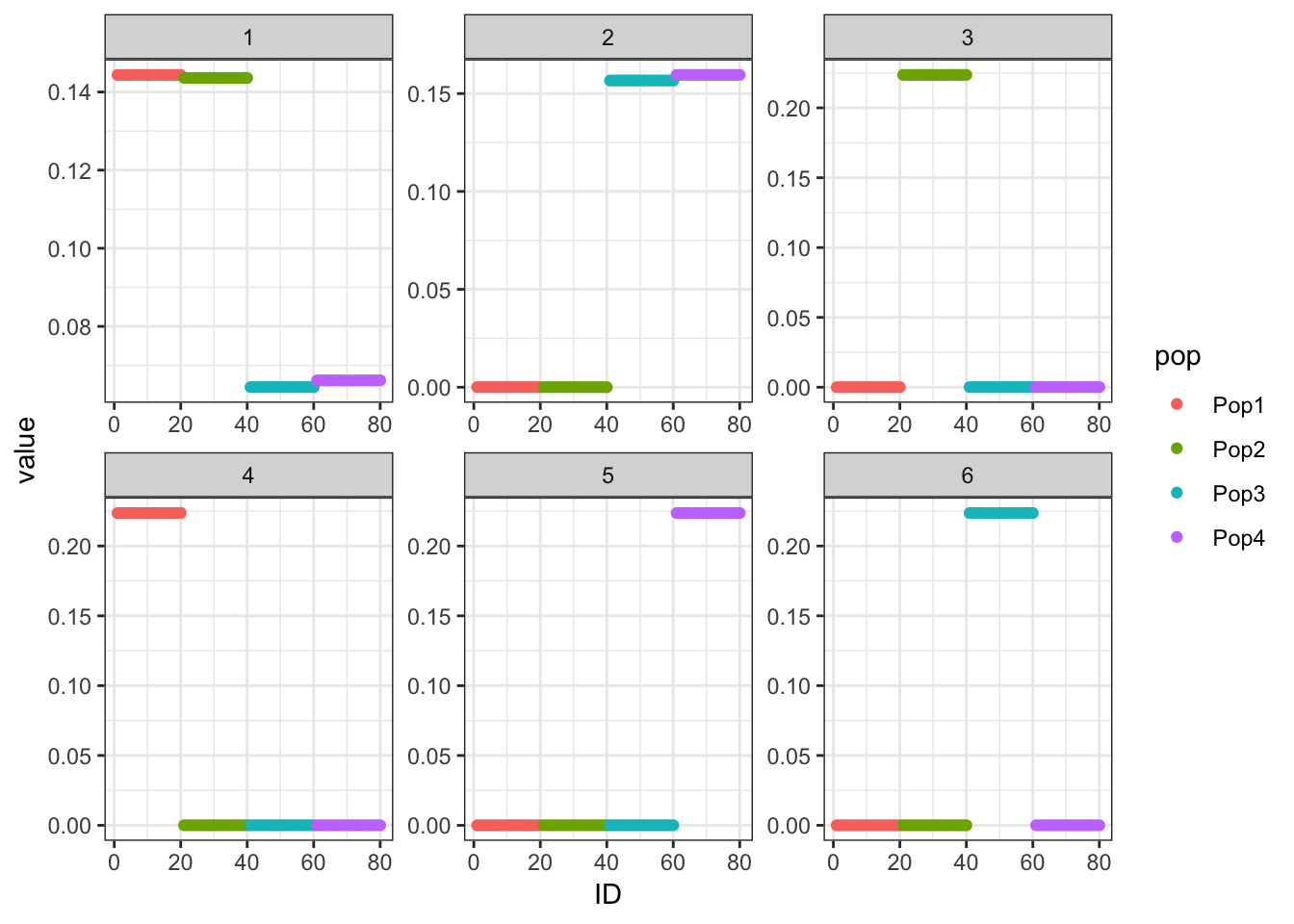

Backfit

I add a final backfitting step.

flash_fit = flashier::flashier(Y,

flash.init = flash_fit,

prior.type=c("nonnegative", "point.normal"),

ebnm.param=list(fixg=TRUE, g=list(pi0=0, a=1, mu=0)),

var.type=0,

backfit="final",

backfit.order="dropout",

backfit.reltol=10)Initializing flash object...

Adding factor 7 to flash object...

Factor doesn't increase objective and won't be added.

Backfitting 6 factors...

Nullchecking 6 factors...

Wrapping up...

Done.Lhat = flash_fit$loadings$normalized.loadings[[1]]

p_res = plot_flash_loadings(flash_fit, n_per_pop)

print(p_res$p1)

| Version | Author | Date |

|---|---|---|

| 912c5ff | jhmarcus | 2019-05-03 |

print(p_res$p2)

| Version | Author | Date |

|---|---|---|

| 912c5ff | jhmarcus | 2019-05-03 |

print(paste0("objective=", flash_fit$objective))[1] "objective=2169849.35240762"print(paste0("est_sd=", sqrt(1 / flash_fit$fit$tau)))[1] "est_sd=0.0101417353225806"print(plot_covmat(Lhat %*% t(Lhat)))

| Version | Author | Date |

|---|---|---|

| 44a10f7 | jhmarcus | 2019-05-05 |

The loadings matrix is recovered pretty well and also looks pretty similar to the greedy run

Backfit (bimodal)

I add a final backfitting step to a flash model with a bimodal family of prior distributions for the loadings with modes at 0 and 1 and the factors have normal priors with a scale for each factor to be estimated.

flash_fit = flashier::flashier(Y,

greedy.Kmax=10,

prior.type=c("nonnegative", "point.normal"),

ash.param=list(fixg=FALSE, g=bimodal_g),

ebnm.param=list(fix_pi0=TRUE, g=list(pi0=0)),

var.type=0,

backfit="final",

backfit.order="dropout",

backfit.reltol=10)Initializing flash object...

Adding factor 1 to flash object...

Adding factor 2 to flash object...

Adding factor 3 to flash object...

Adding factor 4 to flash object...

Adding factor 5 to flash object...

Adding factor 6 to flash object...

Adding factor 7 to flash object...

Factor doesn't increase objective and won't be added.

Backfitting 6 factors...

Nullchecking 6 factors...

Wrapping up...

Done.Lhat = flash_fit$loadings$normalized.loadings[[1]]

p_res = plot_flash_loadings(flash_fit, n_per_pop)

print(p_res$p1)

print(p_res$p2)

print(paste0("objective=", flash_fit$objective))[1] "objective=2171002.56418703"print(paste0("est_sd=", sqrt(1 / flash_fit$fit$tau)))[1] "est_sd=0.0101417265735237"print(plot_covmat(Lhat %*% t(Lhat)))

Medium Noise

Now I simulate data with a standard deviation of the errors set to be 5 times higher than the last simulation:

n_per_pop = 20

sigma_e = .5

p = 10000

sim_res = simpler_tree_simulation(n_per_pop, 10000, sigma_e)

Y = sim_res$YGreedy

flash_fit = flashier::flashier(Y,

greedy.Kmax=10,

prior.type=c("nonnegative", "point.normal"),

ebnm.param=list(fixg=TRUE, g=list(pi0=0, a=1, mu=0)),

var.type=0,

backfit="none")Initializing flash object...

Adding factor 1 to flash object...

Adding factor 2 to flash object...

Adding factor 3 to flash object...

Adding factor 4 to flash object...

Adding factor 5 to flash object...

Adding factor 6 to flash object...

Adding factor 7 to flash object...

Factor doesn't increase objective and won't be added.

Nullchecking 6 factors...

Wrapping up...

Done.Lhat = flash_fit$loadings$normalized.loadings[[1]]

p_res = plot_flash_loadings(flash_fit, n_per_pop)

print(p_res$p1)

| Version | Author | Date |

|---|---|---|

| 912c5ff | jhmarcus | 2019-05-03 |

print(p_res$p2)

| Version | Author | Date |

|---|---|---|

| 912c5ff | jhmarcus | 2019-05-03 |

print(paste0("objective=", flash_fit$objective))[1] "objective=-749201.861202199"print(paste0("est_sd=", sqrt(1 / flash_fit$fit$tau)))[1] "est_sd=0.540899300207415"print(plot_covmat(Lhat %*% t(Lhat)))

| Version | Author | Date |

|---|---|---|

| 44a10f7 | jhmarcus | 2019-05-05 |

The greedy solution recovers the loadings pretty well.

Backfit

flash_fit = flashier::flashier(Y,

flash.init = flash_fit,

prior.type=c("nonnegative", "point.normal"),

ebnm.param=list(fixg=TRUE, g=list(pi0=0, a=1, mu=0)),

var.type=0,

backfit="final",

backfit.order="dropout",

backfit.reltol=10)Initializing flash object...

Adding factor 7 to flash object...

Factor doesn't increase objective and won't be added.

Backfitting 6 factors...

Nullchecking 6 factors...

Wrapping up...

Done.Lhat = flash_fit$loadings$normalized.loadings[[1]]

p_res = plot_flash_loadings(flash_fit, n_per_pop)

print(p_res$p1)

print(p_res$p2)

print(paste0("objective=", flash_fit$objective))[1] "objective=-712470.602509237"print(paste0("est_sd=", sqrt(1 / flash_fit$fit$tau)))[1] "est_sd=0.50320097987893"print(plot_covmat(Lhat %*% t(Lhat)))

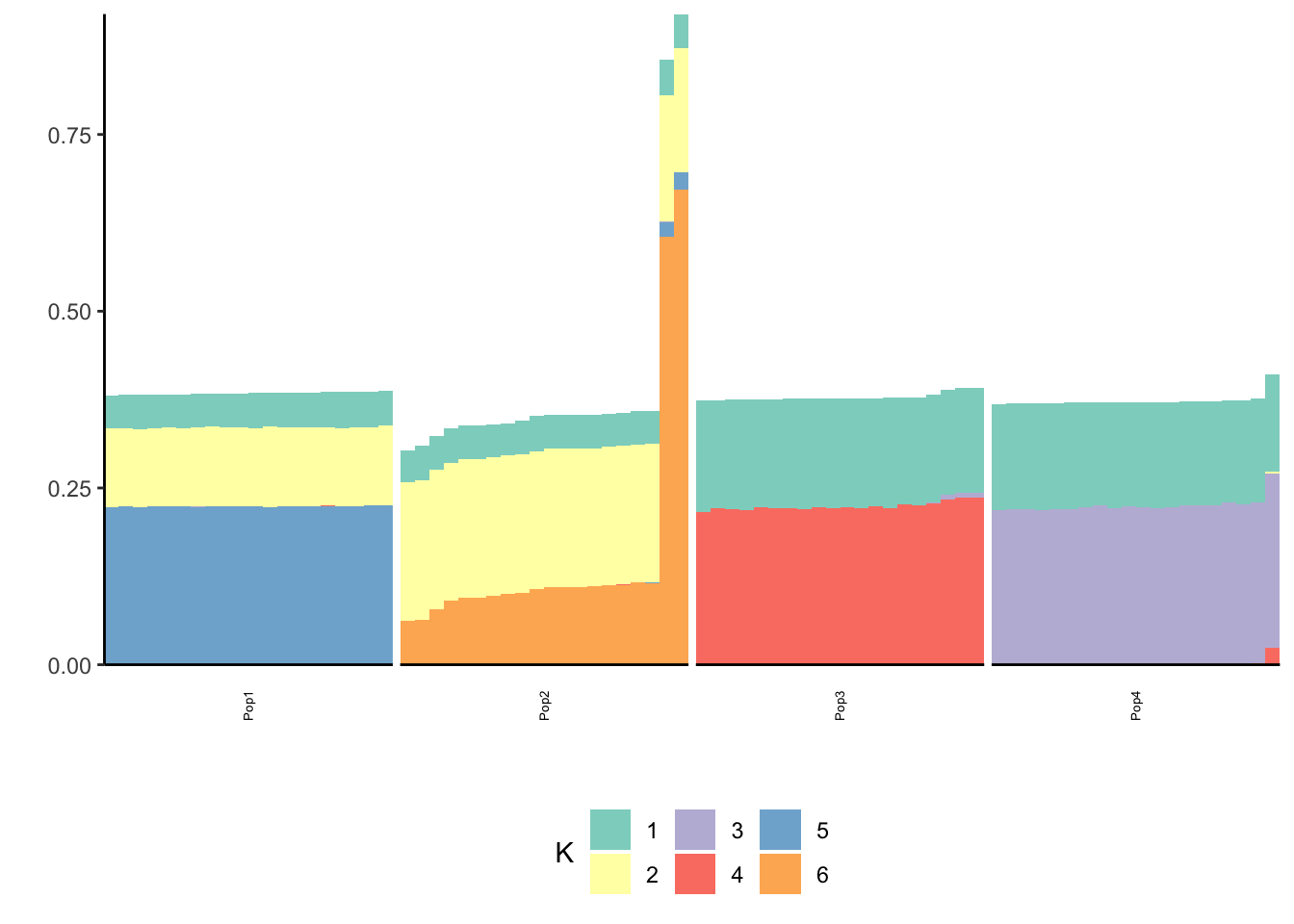

The backfitting begins to get funky / noisy.

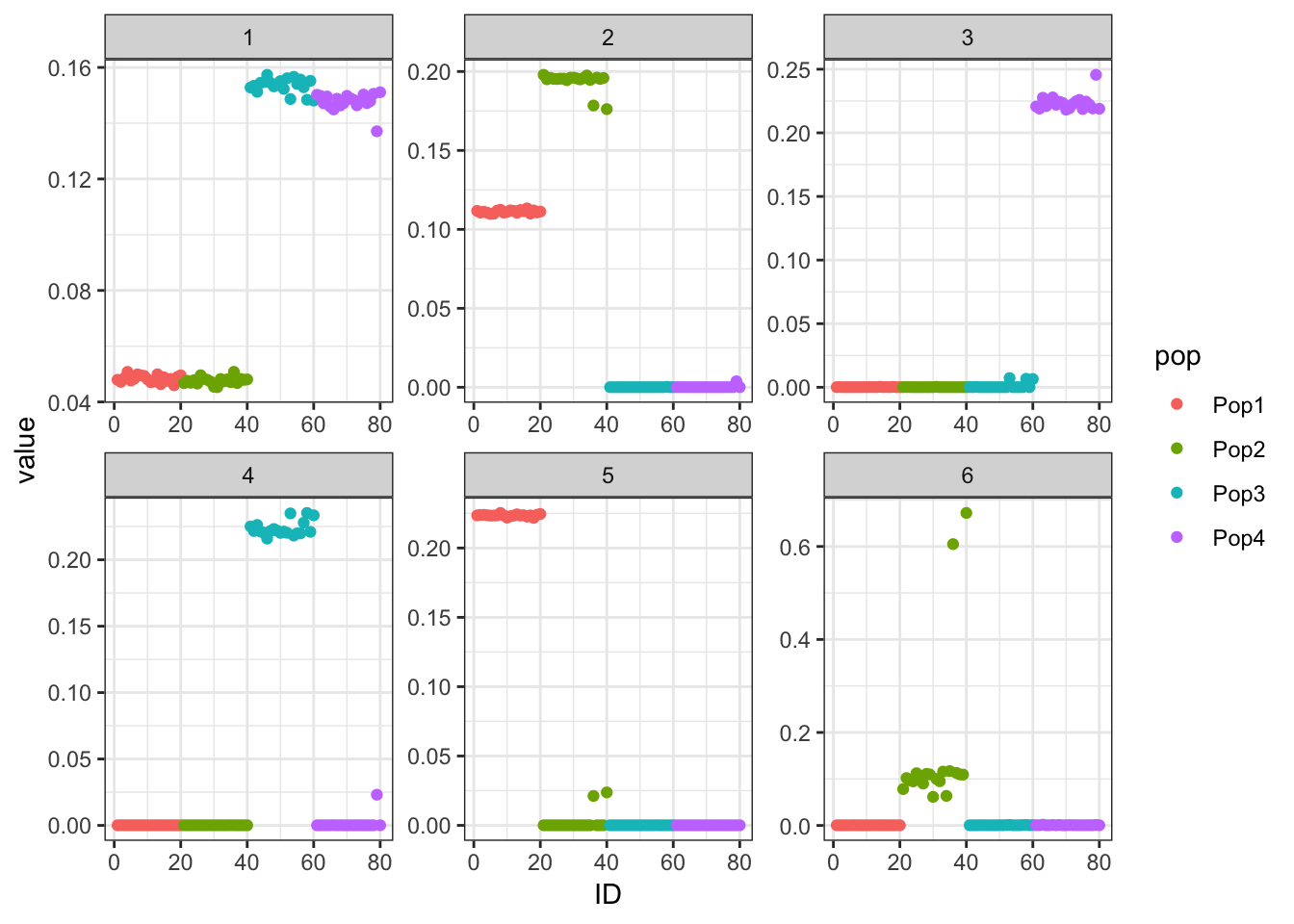

Backfit (bimodal)

flash_fit = flashier::flashier(Y,

greedy.Kmax=10,

prior.type=c("nonnegative", "point.normal"),

ash.param=list(fixg=FALSE, g=bimodal_g),

ebnm.param=list(fix_pi0=TRUE, g=list(pi0=0)),

var.type=0,

backfit="final",

backfit.order="dropout",

backfit.reltol=10)Initializing flash object...

Adding factor 1 to flash object...

Adding factor 2 to flash object...

Adding factor 3 to flash object...

Adding factor 4 to flash object...

Adding factor 5 to flash object...

Adding factor 6 to flash object...

Adding factor 7 to flash object...

Factor doesn't increase objective and won't be added.

Backfitting 6 factors...

Nullchecking 6 factors...

Wrapping up...

Done.Lhat = flash_fit$loadings$normalized.loadings[[1]]

p_res = plot_flash_loadings(flash_fit, n_per_pop)

print(p_res$p1)

| Version | Author | Date |

|---|---|---|

| 912c5ff | jhmarcus | 2019-05-03 |

print(p_res$p2)

| Version | Author | Date |

|---|---|---|

| 912c5ff | jhmarcus | 2019-05-03 |

print(paste0("objective=", flash_fit$objective))[1] "objective=-704982.55805766"print(paste0("est_sd=", sqrt(1 / flash_fit$fit$tau)))[1] "est_sd=0.503265123673624"print(plot_covmat(Lhat %*% t(Lhat)))

| Version | Author | Date |

|---|---|---|

| 44a10f7 | jhmarcus | 2019-05-05 |

High Noise

Now the standard deviation of the errors is 10 times higher than the original simulation

n_per_pop = 20

sigma_e = 1.0

p = 10000

sim_res = simpler_tree_simulation(n_per_pop, p, sigma_e)

Y = sim_res$YGreedy

flash_fit = flashier::flashier(Y,

greedy.Kmax=10,

prior.type=c("nonnegative", "point.normal"),

ebnm.param=list(fixg=TRUE, g=list(pi0=0, a=1, mu=0)),

var.type=0,

backfit="none")Initializing flash object...

Adding factor 1 to flash object...

Adding factor 2 to flash object...

Adding factor 3 to flash object...

Adding factor 4 to flash object...

Adding factor 5 to flash object...

Adding factor 6 to flash object...

Adding factor 7 to flash object...

Factor doesn't increase objective and won't be added.

Nullchecking 6 factors...

Wrapping up...

Done.Lhat = flash_fit$loadings$normalized.loadings[[1]]

p_res = plot_flash_loadings(flash_fit, n_per_pop)

print(p_res$p1)

print(p_res$p2)

print(paste0("objective=", flash_fit$objective))[1] "objective=-1240334.83467024"print(paste0("est_sd=", sqrt(1 / flash_fit$fit$tau)))[1] "est_sd=1.02976540887562"print(plot_covmat(Lhat %*% t(Lhat)))

The greedy solution still recovers the loadings well!

Backfit

flash_fit = flashier::flashier(Y,

flash.init = flash_fit,

prior.type=c("nonnegative", "point.normal"),

ebnm.param=list(fixg=TRUE, g=list(pi0=0, a=1, mu=0)),

var.type=0,

backfit="final",

backfit.order="dropout",

backfit.reltol=10)Initializing flash object...

Adding factor 7 to flash object...

Factor doesn't increase objective and won't be added.

Backfitting 6 factors...

Nullchecking 6 factors...

Factor 4 removed, increasing objective by 1.394e+01.

Factor 6 removed, increasing objective by 1.183e-01.

Adding factor 7 to flash object...

Factor doesn't increase objective and won't be added.

Backfitting 6 factors...

Nullchecking 6 factors...

Wrapping up...

Done.Lhat = flash_fit$loadings$normalized.loadings[[1]]

p_res = plot_flash_loadings(flash_fit, n_per_pop)

print(p_res$p1)

print(p_res$p2)

print(paste0("objective=", flash_fit$objective))[1] "objective=-1208591.98076189"print(paste0("est_sd=", sqrt(1 / flash_fit$fit$tau)))[1] "est_sd=0.999960677283949"print(plot_covmat(Lhat %*% t(Lhat)))

Now the backfitting is looking like the results of the previous simulation I ran with Binomial noise etc in Simple Tree Simulation. So perhaps backfitting is having a hard time in the face of “high” noise scenarios and somehow greedy is robust to this noise? Either way backfitting seems to give a fitted covariance matrix that look very close to the truth across all the simulations so it is indeed fitting the data well.

Backfit (bimodal)

flash_fit = flashier::flashier(Y,

greedy.Kmax=10,

prior.type=c("nonnegative", "point.normal"),

ash.param=list(fixg=FALSE, g=bimodal_g),

ebnm.param=list(fix_pi0=TRUE, g=list(pi0=0.0)),

var.type=0,

backfit="final")

Lhat = flash_fit$loadings$normalized.loadings[[1]]

p_res = plot_flash_loadings(flash_fit, n_per_pop)

print(p_res$p1)

print(p_res$p2)

print(paste0("objective=", flash_fit$objective))

print(paste0("est_sd=", sqrt(1 / flash_fit$fit$tau)))

print(plot_covmat(Lhat %*% t(Lhat)))This throws this error so I don’t evaluate it:

Error in if (!all(nonzero_cols)) { :

missing value where TRUE/FALSE neededBackfit (bimodal, FLASHR)

data = flash_set_data(Y)

f = flash(data,

Kmax=10,

var_type="constant",

ebnm_fn=list(l="ebnm_ash", f="ebnm_pn"),

ebnm_param=list(l=list(fixg=FALSE, g=bimodal_g),

f=list(fix_pi0=TRUE, g=list(pi0 = 0))),

backfit=TRUE)

l_df = as.data.frame(f$fit$EL)

colnames(l_df) = 1:ncol(l_df)

l_df$ID = 1:nrow(l_df)

l_df$pop = c(rep("Pop1", n_per_pop), rep("Pop2", n_per_pop),

rep("Pop3", n_per_pop), rep("Pop4", n_per_pop))

gath_l_df = l_df %>% gather(K, value, -ID, -pop)

p = ggplot(gath_l_df, aes(x=ID, y=value, color=pop)) +

geom_point() +

facet_wrap(K~., scale="free") +

theme_bw()

p

sessionInfo()R version 3.5.1 (2018-07-02)

Platform: x86_64-apple-darwin13.4.0 (64-bit)

Running under: macOS 10.14.2

Matrix products: default

BLAS/LAPACK: /Users/jhmarcus/miniconda3/lib/R/lib/libRblas.dylib

locale:

[1] en_US.UTF-8/en_US.UTF-8/en_US.UTF-8/C/en_US.UTF-8/en_US.UTF-8

attached base packages:

[1] stats graphics grDevices utils datasets methods base

other attached packages:

[1] RColorBrewer_1.1-2 flashr_0.6-3 flashier_0.1.1

[4] ashr_2.2-37 tidyr_0.8.2 dplyr_0.8.0.1

[7] ggplot2_3.1.0

loaded via a namespace (and not attached):

[1] softImpute_1.4 tidyselect_0.2.5 xfun_0.4

[4] purrr_0.3.0 reshape2_1.4.3 lattice_0.20-38

[7] colorspace_1.4-0 htmltools_0.3.6 viridisLite_0.3.0

[10] yaml_2.2.0 rlang_0.3.1 mixsqp_0.1-115

[13] pillar_1.3.1 glue_1.3.0 withr_2.1.2

[16] foreach_1.4.4 plyr_1.8.4 stringr_1.4.0

[19] munsell_0.5.0 gtable_0.2.0 workflowr_1.2.0

[22] codetools_0.2-16 evaluate_0.12 labeling_0.3

[25] knitr_1.21 doParallel_1.0.14 pscl_1.5.2

[28] parallel_3.5.1 Rcpp_1.0.0 scales_1.0.0

[31] backports_1.1.3 truncnorm_1.0-8 fs_1.2.6

[34] gridExtra_2.3 digest_0.6.18 stringi_1.2.4

[37] ebnm_0.1-17 grid_3.5.1 rprojroot_1.3-2

[40] tools_3.5.1 magrittr_1.5 lazyeval_0.2.1

[43] tibble_2.0.1 crayon_1.3.4 whisker_0.3-2

[46] pkgconfig_2.0.2 MASS_7.3-51.1 Matrix_1.2-15

[49] SQUAREM_2017.10-1 assertthat_0.2.0 rmarkdown_1.11

[52] iterators_1.0.10 viridis_0.5.1 R6_2.4.0

[55] git2r_0.23.0 compiler_3.5.1